Thank you very much, changing to ProRes fixed it, thanks!!!

Hi Dakota—following up on your earlier comment:

“Lowering VRAM will lower the model quality, just so you’re aware.”

Could you clarify what this entails?

I’m operating a 4080 Super with the VRAM usage slider set to approximately 74% in Preferences. Does this setting directly reduce the model’s fidelity, even with around 4 GB of headroom remaining? Or are quality reductions only triggered below a specific internal threshold, making 74% still safe for full-quality output?

Your comment here, along with a related unanswered thread during Beta 2—where a user inquired if higher VRAM enables “bigger models” akin to LLMs—suggests that the model dynamically scales quality. However, this hasn’t been clearly explained.

This would greatly assist users with high-end GPUs like the 4080, 4090, and 5090, who assume that 70–80% VRAM usage equates to full model quality.

We’d appreciate a direct answer—ideally more than “performance may vary.”

Thanks.

Hi, caused by the watermark

Quite an undervolting.

I’m still on the curve-undervolted RTX 3090 and I’ve managed to get my hands on a 5080 FE, yet the smaller amount of VRAM stops me from upgrading.

OUCH.

I think this question triggers the “secret” about

But from what I understand, the VRAM is “responsible” for understanding the frame sequence and keeping the details obtained from the video.

Utilizing 100% GPU capacity may significantly enhance frame buffering, improving the model’s comprehension. For instance, if a low-resolution video initially shows a girl, and later provides a close-up, the model could leverage this improved detail to enhance facial features retrospectively. And again, that’s why the cloud model can produce better “quality”: it can “see” more frames.

I had problems with codec settings, with p7 errors, but changing to ProRes instead of H265/H264/AV1 fixed the problem. Now processing starts without errors.

Hi Topaz Support,

I get the error message:

- Error setting option preset to value p6.

I analyzed the whole thing and looked at the log files, and found out something:

The error:

[h264_nvenc @ …] Unable to parse option value "p6"

[h264_nvenc @ …] Error setting option preset to value p6.

In the log files, I was able to trace the affected command line, which is passed internally to ffmpeg by Topaz:

--ffmpeg-encoding -c:v h264_nvenc -profile:v high -pix_fmt yuv420p -g 30 -rc cbr -b:v 10M -preset p6 …

The preset p6 is valid and available in FFmpeg 7.1.

Therefore, the error is not related to the FFmpeg version or NVIDIA driver, but rather appears to be caused by how the runner.exe process constructs or parses the FFmpeg command line internally.

In my case, the log shows the following being passed:

--ffmpeg-encoding -c:v h264_nvenc ... -preset p6 ...

The error suggests a possible parsing issue, malformed quoting, or argument splitting problem during this internal call.
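For anyone who wants to rule the encoder itself in or out before suspecting argument parsing, here is a minimal Python sketch. It assumes the ffmpeg binary in question can be run directly and that its encoder help (ffmpeg -h encoder=h264_nvenc) lists the accepted preset names, one per indented line. The function names are made up for this example and are not part of Topaz Video AI.

```python
import subprocess
from typing import Set


def listed_option_tokens(encoder_help: str) -> Set[str]:
    """Collect the first token of every indented line of the encoder help text.

    Named constants such as preset values appear as the first token on
    their own indented line, so a supported preset shows up in this set.
    """
    tokens: Set[str] = set()
    for line in encoder_help.splitlines():
        parts = line.split()
        if parts and line.startswith(" "):
            tokens.add(parts[0])
    return tokens


def encoder_accepts_preset(ffmpeg_path: str, encoder: str, preset: str) -> bool:
    """Ask one specific ffmpeg binary whether its encoder help lists `preset`."""
    result = subprocess.run(
        [ffmpeg_path, "-hide_banner", "-h", f"encoder={encoder}"],
        capture_output=True,
        text=True,
        check=False,
    )
    # Help normally goes to stdout; stderr is included in case a build differs.
    return preset in listed_option_tokens(result.stdout + result.stderr)
```

If encoder_accepts_preset(path, "h264_nvenc", "p6") comes back False for one ffmpeg.exe and True for another, the preset is probably just missing from that build rather than being mangled on the command line.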

Thanks, but it seems that is happening to other users not on the trial version…

I’ve heard that Topaz Gigapixel AI has a very good enlargement model, but I wonder what the difference is in terms of time required and video quality between taking a still image from a video and enlarging it with TGAI versus using Starlight Mini?

Has anyone tried it?

I FOUND THE SOLUTION:

When using Topaz Video AI 7.0, I encountered this error on export:

Error setting option preset to value p6

Initially, I assumed the issue was due to an invalid preset or an outdated internal FFmpeg version. However, after detailed testing, I discovered the actual cause.

Root Cause

If the Windows system has an older version of FFmpeg installed and registered via the PATH environment variable, Topaz Video AI does not consistently use its own bundled ffmpeg.exe. Instead, it sometimes calls the system-wide FFmpeg, which may lack support for presets like p6.

Verified Fix

After replacing my system’s ffmpeg.exe (located at C:\Program Files\ffmpeg\bin\ffmpeg.exe) with the latest official FFmpeg 7.1 build (which supports p6), the error disappeared and exports worked flawlessly.

This confirms that:

Topaz Video AI relies on the PATH-resolved ffmpeg.exe if one exists – even though an internal version is available.

To avoid this problem and make Topaz Video AI more robust, I suggest the following:

- Always call FFmpeg using an absolute path, pointing to your bundled version (e.g.):

C:\Program Files\Topaz Labs LLC\Topaz Video AI\ffmpeg.exe

- Avoid generic calls like ffmpeg ..., which are resolved via PATH, leading to unpredictable behavior depending on the user’s system.

- Optionally: Add a version check or warning if the detected ffmpeg.exe is not the intended internal build.
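To illustrate the last suggestion, here is a rough Python sketch of such a warning. It is only an assumption about how a check could look, not how runner.exe works: it compares what a bare ffmpeg call would resolve to on PATH with the bundled path quoted above. BUNDLED_FFMPEG and both function names are hypothetical.

```python
import os
import shutil
from pathlib import Path
from typing import Optional

# Bundled location as quoted in this post; adjust if your installation differs.
BUNDLED_FFMPEG = Path(r"C:\Program Files\Topaz Labs LLC\Topaz Video AI\ffmpeg.exe")


def same_file(a: Path, b: Path) -> bool:
    """Compare two paths after normalisation (case-insensitive on Windows)."""
    return os.path.normcase(os.path.abspath(a)) == os.path.normcase(os.path.abspath(b))


def check_resolved_ffmpeg(bundled: Path, search_path: Optional[str] = None) -> Optional[str]:
    """Return a warning if a bare `ffmpeg` call would not reach `bundled`.

    `search_path` defaults to the PATH environment variable.
    """
    resolved = shutil.which("ffmpeg", path=search_path)
    if resolved is None:
        # Nothing on PATH: a bare call would fail instead of using a wrong build.
        return None
    if same_file(Path(resolved), bundled):
        return None
    return f"PATH resolves ffmpeg to {resolved}, not the bundled {bundled}"
```

Calling check_resolved_ffmpeg(BUNDLED_FFMPEG) on an affected machine should return a message naming the stray copy. Launching the bundled binary by its absolute path avoids the lookup altogether.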

I hope this helps you improve the stability of the application. Let me know if I can provide additional information or testing.

Hi. I found the solution in my case.

In my case, Topaz was using an outdated version of ffmpeg that I still had on my PC. It seems as if they don’t force their program to use the version they shipped with it.

Try the following:

cmd

then type:

where ffmpeg

You’ll probably see a path where you still have ffmpeg installed. If you then change to that folder with cd and type

ffmpeg.exe -version

you’ll see that it’s an older version. I think ffmpeg has only supported the “p” presets since version 7.

Download the latest version from

and replace the ffmpeg.exe on your PC. It should work.

Topaz AI just needs to ensure that they 100% use their own ffmpeg in the future.

It worked for me that way.
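If you would rather script the two manual checks above (where ffmpeg, then ffmpeg.exe -version), here is a small Python sketch. It assumes the first line of the version output starts with "ffmpeg version" followed by the version token; the function names are invented for this example.

```python
import os
import re
import subprocess
from pathlib import Path
from typing import List, Optional


def find_all_ffmpeg(search_path: Optional[str] = None) -> List[Path]:
    """List every ffmpeg executable on PATH in lookup order, like `where ffmpeg`."""
    if search_path is None:
        search_path = os.environ.get("PATH", "")
    name = "ffmpeg.exe" if os.name == "nt" else "ffmpeg"
    hits: List[Path] = []
    for directory in search_path.split(os.pathsep):
        if not directory:
            continue
        candidate = Path(directory) / name
        if candidate.is_file():
            hits.append(candidate)
    return hits


def parse_version(version_output: str) -> Optional[str]:
    """Pull the version token out of the first line of `ffmpeg -version` output."""
    match = re.match(r"ffmpeg version (\S+)", version_output)
    return match.group(1) if match else None


def report_version(ffmpeg_path: Path) -> Optional[str]:
    """Run one specific ffmpeg binary and return its reported version token."""
    result = subprocess.run(
        [str(ffmpeg_path), "-version"],
        capture_output=True,
        text=True,
        check=False,
    )
    return parse_version(result.stdout)
```

Looping over find_all_ffmpeg() and printing report_version(path) for each hit shows at a glance whether an old copy sits earlier on PATH than you expected.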

The reason we need a video model is that we need temporal consistency. The individual images from an image model cannot add up to a temporally consistent result.

This worked for me too.

I tried it and it’s bad. The face changes so much during the video clip. The problem is the consistency between frames, and even across later scenes. Starlight learns what is what, and knows where to replicate the face, the hair, and even the objects. What we can tell is that Starlight can really learn, hold that information, and replicate it when needed. It can retain details and go back and forth between the original frame and multiple others.

Are you guessing, or is this how the model works?

For example, if a video has a scene with the character in the background and also a scene where the character is close to the camera, will this AI take the face data from the close-up and use it to regenerate the smaller face in better detail?

This kind of process is what I’ve always wanted.

Also I’m wondering, does it still look at the entire video even if you are only upscaling the beginning? If I choose to upscale the first 10 seconds of a video, is the model looking for a larger face only in those 10 seconds, or is it looking at the whole video, even though I’m not processing the whole video?

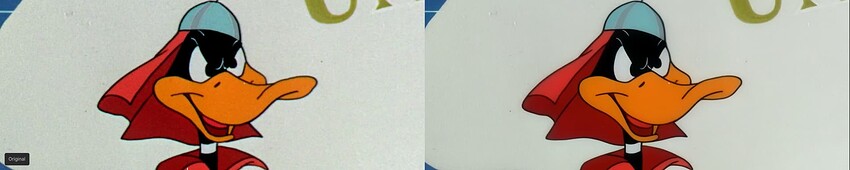

There does appear to be some color shift or darkening happening. Look at the background. I also took the image into Photoshop, and the colors on the right side, like the orange and the red, are just slightly darker.

I too thought so, but now I realize it is not so.

I just installed 7.0 and every time I try to render something using Starlight Mini, the render errors out. Every other model works fine. I’m on Windows 10 with an RTX 4070 and a 9700X.

They can’t tell me whether I’m wrong or right because the Starlight model is proprietary, so it’s a secret. But in my tests, I used some trick videos, and the conclusion is very clear. They have a window of frames that the model examines; after that, the model starts imagining things from noise and compares them to the original frame. When it examines the frames, if it finds a better face at another time in the same video, it will use it to reconstruct the character, object, or style. In engineering terms, the larger the video, the more the model will know about it. Longer videos have better output. Why? Because they analyze all the frames and collect a lot of information.

Again, Topaz will not confirm whether this is correct, but my guess doesn’t come from nowhere. Based on all the information here, and on what we have today, the model has several steps.

- The system processes a window of video frames, analyzing them to differentiate noise, moving objects, static elements, and consistent background features. This analysis informs the generation of synthetic frames from scratch, which are then compared to the original video for refinement. The processed frames within the window are delivered to the user, and the process iterates with the enhanced contextual information from the preceding window.
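To make that guess concrete, here is a toy Python sketch of windowed processing with context carried forward. It is purely illustrative of the description above and says nothing about how Starlight is actually implemented. Each frame is reduced to a single made-up "detail" number, and process_in_windows is a hypothetical name.

```python
from typing import List


def process_in_windows(detail: List[float], window: int) -> List[float]:
    """Toy model of the guessed pipeline, not Topaz's code.

    Each output frame gets the best detail seen in its own window or
    carried over from earlier windows.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    carried_best = 0.0  # context handed from one window to the next
    output: List[float] = []
    for start in range(0, len(detail), window):
        chunk = detail[start:start + window]
        best = max(carried_best, max(chunk))  # analyse the whole window first
        output.extend(best for _ in chunk)    # then deliver that window's frames
        carried_best = best                   # context for the following window
    return output
```

In this toy, process_in_windows([0.2, 0.3, 0.9, 0.1], 2) gives [0.3, 0.3, 0.9, 0.9]: a close-up in a later window cannot improve frames that were already delivered. Whether the real model behaves like that is exactly the part nobody has confirmed.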