It looks like CPU performance matters much more than GPU performance, and Intel platforms seem to perform better overall than AMD platforms, even with GPU acceleration enabled.

@charoldpctech has a Ryzen 5 3600 with an RTX 3060Ti, and the performance was around 0.36~0.4s/frame.

@ahilecostas has an i7 9700F with all cores at 4.5GHz and an RX480 8G, and the performance was around 0.14~0.2s/frame.

@jasonkeopka has a Ryzen 9 3900X at stock clock speed with a 6800XT, and the performance was around 0.25~0.38s/frame, depending on the memory and Fabric speeds.

I also gave it a quick try on several of my PCs and a laptop (VEAI 1.8.1 trial version):

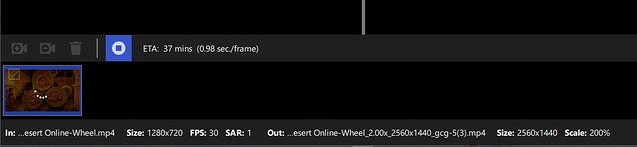

Software settings: Artemis LQ, 200%, highest VRAM usage, "reduce GPU load" disabled, model download enabled.

- Intel 7980XE (18 cores), all cores at 4.6GHz, 4000MHz memory speed, RTX 3090 at 2100MHz GPU and 10000MHz VRAM: around 0.12~0.17s/frame.

- Intel 6950X (10 cores), all cores at 4.3GHz, 3200MHz memory speed, RTX 3090 at 2000MHz GPU and 10000MHz VRAM: around 0.16~0.2s/frame.

- AMD Ryzen 5 3600X (6 cores), all cores at 4.3GHz, 3266MHz memory speed (synced Fabric speed), RX580 8GB at 1350MHz GPU and stock VRAM speed: around 0.5~0.56s/frame.

- Intel 8809G (Intel Hades Canyon NUC, 4 cores, power limit unlocked), all cores at 4.5GHz, 3200MHz memory speed, RTX 2080Ti (connected through a Razer Core X Thunderbolt 3 eGPU box) at 2100MHz GPU and 8500MHz VRAM: around 0.3~0.35s/frame.

- Intel 8750H (ThinkPad X1 Extreme Gen1, 6 cores), all cores at 3.9GHz, 2666MHz memory speed, GTX 1050Ti Max-Q at stock speed: around 1.9~1.95s/frame.

The ThinkPad's poor performance is probably due to the laptop's overall power limit: CPU usage stayed at around 20%, and ThrottleStop showed the CPU hitting its power limit at only 15W while VEAI was running. I guess the result would be much better with an external GPU, since the CPU power limit on its own can be unlocked with Intel XTU and held at 65~70W when running CineBench.

Among the other 4 PCs, the 7980XE at 4.6GHz had the best performance, along with much higher CPU power consumption, and the 6950X at 4.3GHz came 2nd.

The Hades Canyon NUC with an external GPU was surprisingly the 3rd fastest, even though it has fewer CPU cores than the Ryzen 5 3600X, albeit with a slightly higher all-core clock speed.

Another unusual thing I’ve noticed is that CPU usage on the Intel CPUs usually stayed at 45~60%, while on my Ryzen 5 3600X platform it only stayed at around 25%~30%, roughly half that of the Intel platforms.

On the other hand, with the Ryzen 5 and RX580 8G, GPU usage stayed at almost 100%, while on the Intel platforms with the RTX 3090, GPU usage stayed at only around 15%.
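
To get a feel for what these s/frame numbers mean in practice, here is a small Python sketch that converts them to frames per second and estimates the total processing time for a clip. The s/frame ranges are the ones I measured above; the 10-minute, 30fps clip is only a made-up example, and the function names are my own, not anything from VEAI.

```python
def fps(seconds_per_frame: float) -> float:
    """Convert seconds per frame to frames per second."""
    return 1.0 / seconds_per_frame


def render_hours(frame_count: int, seconds_per_frame: float) -> float:
    """Estimate total processing time in hours for a given frame count."""
    return frame_count * seconds_per_frame / 3600.0


# (fastest, slowest) s/frame ranges from my tests above.
results = {
    "7980XE + RTX 3090": (0.12, 0.17),
    "6950X + RTX 3090": (0.16, 0.20),
    "8809G NUC + RTX 2080Ti eGPU": (0.30, 0.35),
    "Ryzen 5 3600X + RX580 8GB": (0.50, 0.56),
    "8750H + GTX 1050Ti Max-Q": (1.90, 1.95),
}

# Hypothetical clip: 10 minutes at 30fps (assumption, for illustration only).
CLIP_FRAMES = 10 * 60 * 30

for name, (fast, slow) in results.items():
    print(
        f"{name}: {fps(slow):.1f}~{fps(fast):.1f} fps, "
        f"{render_hours(CLIP_FRAMES, fast):.1f}~"
        f"{render_hours(CLIP_FRAMES, slow):.1f} h for the clip"
    )
```

This is only rough arithmetic: it assumes the s/frame figure stays constant over the whole clip, which may not hold for other models or input resolutions.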