Perhaps an Avisynth script is also an option, using the CLI or Hybrid; the VapourSynth “FineSharpen” filter does a good job. When upscaling, using Spline64 also adds some sharpness when the result is too smooth.

Is it possible to re-download and reinstall the Starlight Mini package from within the program? Otherwise, the only solution is to remove the program, including everything in the registry, so that a new installation downloads it again.

Please please do not add sharpening to the Starlight Mini model… Some of us want the results to look natural and realistic!

I think sharpening would be fine if it’s optional; everyone can decide whether to sharpen or not.

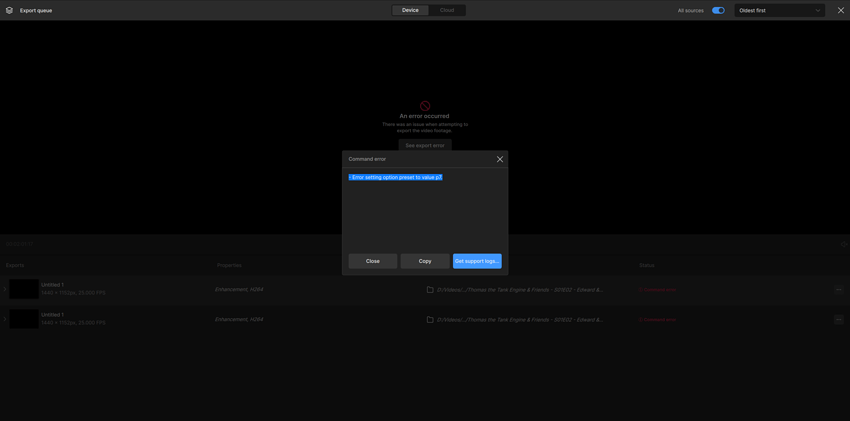

When I run it with Starlight Mini, an error occurs immediately and it doesn’t run.

It runs normally if I select another output resolution and then go back to the original selection.

I noticed that when I scaled footage up to 720p it was quite soft, while the same footage scaled to 1080p was far sharper. That was one pass on each; I don’t mean multipass. I will say even the soft footage was quite spectacular, even though we have all come to expect more. If I had seen “denoising” software that did what the soft version did, even just ten years ago, I really don’t think I would have believed my eyes.

The more I use Mini the more impressed I become. I think Topaz has outdone themselves, but I hope they keep it moving and improving here. If they do that, they will remain unsurpassed for years to come, likely more. Not three months ago I was very worried about Topaz, but with some attention to text and textures, Mini has the potential to cement them as the absolute platinum standard for upscaling and artifact correction.

I agree with this. It should probably be an option. I haven’t done enough testing to have a preference yet.

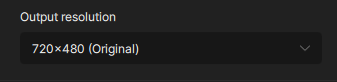

Hi Canax, this is probably due to the resolution settings in the Enhancement panel. You should only use x1 (or x2 and then back to x1). For a 4:3 video (for example an old VHS cassette at 720x576), the output must then be 1280x960. If you used other resolution parameters (for example ‘upscale to FHD’), the output file ends up with much higher numbers, and that increases the render time considerably, like the 0.1 fps or 0.2 fps in your example. I made the same mistake at the beginning and got 0.2 fps. Now, when I only select x1 or x2 (but you then have to go back to x1, there are still bugs), I get 0.7 fps.

To sum it up visually:

Always click Original size here, and if you get an error, click x2 and then go back to Original. Good luck!
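To put rough numbers on the resolution point, here is a small Python sketch comparing pixel counts for the 1280x960 output and a 1920x1080 FHD output. It assumes processing cost grows with the number of output pixels, which is only an assumption, and the helper name `pixel_count` is made up for illustration. It does not try to explain the exact fps figures quoted above.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels in a single frame of the given size."""
    return width * height


# 4:3 output size mentioned in the post above
base = pixel_count(1280, 960)
# Full HD frame, as produced by an "upscale to FHD" style setting
fhd = pixel_count(1920, 1080)

# How many times more pixels the FHD frame contains
ratio = fhd / base
print(f"1280x960: {base} px, 1920x1080: {fhd} px, ratio {ratio:.2f}x")
```

So an FHD frame has roughly 1.7 times as many pixels to generate as a 1280x960 frame.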

All feedback related to Starlight Mini

- Seems like it throws errors with portrait-rotated iPhone video

- Will selecting a smaller time range for testing be added? For example, if we want to test it on a 10-second piece of the video without making a separate clip first?

- It sometimes seems to think the video’s original resolution is 720p when it is actually less (selecting a model other than Starlight Mini corrects the issue)

Very interesting, thank you. So you think that for upscaling a Starlight render from 1280x960 to a more standard resolution (1440x1080 or 1920x1080), Rhea XL is a better option than classic Proteus or Artemis?

I ran a few tests after reading your post, upscaling a Starlight Mini render from 1280x960 to 4:3 full HD, so 1440x1080. I tried Rhea XL, Proteus, and Proteus with Artemis as a second enhancement. And you were right, Rhea XL gives the best results. The only hiccup is arm hair in close-ups, which looks unnatural with Rhea XL, while Proteus kept it natural. But overall, it seems Rhea XL is the way to go! Thanks for letting us know.

Sorry, German

The question is whether 720x576 is actually upscaled pixel-natively to 1280x960, or whether TVAI simply sets a DAR value and the picture is stretched horizontally during playback. I don’t recommend the latter, since that stretching step is not model-enhanced.

It’s best to check the result with MediaInfo to see what TVAI does, and whether it really is native 1280x960 pixels, because TVAI handles anamorphic material poorly. Personally, because of bad experiences, I always stretch PAL 4:3 material in VirtualDub or Hybrid to 768x576, or to 1024x576 for 16:9, and feed that to TVAI.
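For anyone wondering where those numbers come from, this short Python sketch computes the square-pixel width for a given frame height and display aspect ratio. The function name `square_pixel_width` is just an illustrative helper, not part of TVAI, VirtualDub, or Hybrid, and the sketch only covers the arithmetic, not how any of those tools resize internally.

```python
from fractions import Fraction


def square_pixel_width(height: int, dar: Fraction) -> int:
    """Width that yields the display aspect ratio `dar` with square pixels."""
    return round(height * dar)


pal_height = 576  # PAL frame height (stored frame is 720x576)

# 4:3 PAL material stretched to square pixels
print(square_pixel_width(pal_height, Fraction(4, 3)))   # 768
# 16:9 PAL material stretched to square pixels
print(square_pixel_width(pal_height, Fraction(16, 9)))  # 1024
# The 4:3 output height discussed in this thread
print(square_pixel_width(960, Fraction(4, 3)))          # 1280
```

If MediaInfo reports a stored width that differs from the value computed this way for the reported height and display aspect ratio, the file is most likely still anamorphic and is being stretched by the player.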

Yes, it seems RheaXL is made for Starlight footage, judging by what it does at the pixel level. But a RheaXL upscale can also produce artifacts; the model is more like a beta. I also recommend trying a 1920x1440 Starlight render and using that as the source for 1x RheaXL. When you don’t upscale with RheaXL, it delivers natural results without face distortions.

RheaXL 1x has been on my radar for a while, but I rarely used it because it produced worse jagged contours at 1x. Now it seems that with a Starlight render as the source this is much less pronounced, so it could be an option now. Give it a try.

Title: Starlight Mini – Enhancement or Transformative Recreation?

After using Starlight Mini Beta 4 in depth—having worked with Topaz’s prior models since 2019/2020—I compiled an independent research paper examining whether this model is simply an upscaler… or something more generative in nature.

The paper introduces the idea of Transformative Recreation (TR), explores what makes Starlight Mini different from traditional enhancement, and outlines the legal, ethical, and creative implications based on public beta usage and observed behavior.

It’s based entirely on public-facing versions—no proprietary material. It’s intended for other testers, professionals, and creators navigating what this shift in AI-powered video restoration actually means.

Why does generative AI need a new name…?

That’s your ‘takeaway’? I couldn’t name it, it WAS named by others as far as I am aware - but you can keep that discussion to your internal self now hopefully.

Please add pause and resume for the Starlight Mini model.

I feel this is an essential feature due to the slow processing time of this model.

I don’t see much philosophical difference between the old models and this one, not even between traditional photography and videography or digital photography not supported by AI. Not even if we’re talking about analog chemical photography. All, absolutely all technologies involve an interpretation of reality. To begin with, we compress the three dimensions of space into two. Color is reinterpreted by a chemical emulsion or by algorithms. In every case, a large part of the image is captured out of focus due to the physical limitations of lenses and technologies. This curious “issue” is something we’ve become accustomed to and, in many fields, is even considered an “advantage”: the much-praised “bokeh.” And there are many other aspects we could consider.

So, to what extent is the information in each image largely “invented” by technology? To what extent is everything, in a way, “generative”? Is our own eye-brain vision system generative as well? Perhaps it’s more a quantitative than a qualitative question. We’re doing what we’ve always done, but now we’re able to do it “better”? I don’t know. I’ll leave that for all of you to reflect on.