You still never mentioned the future memory requirements for Starlight/Astra on NVIDIA GPUs.

Will you try to keep it within 16 GB?

Will future versions run at full capacity on the RTX xx90 only?

The fact this needed to be shown to Topaz staff is shocking! It raises the question: if nobody had screenshotted it and it was just our word, would this issue even have been fixed? What’s stopping it from happening again? And the fact that staff can’t remember, or don’t know, what the CEO told everyone in a public statement only 6 months ago does raise some eyebrows. Are they trying to give us less and less on purpose so they can build up Pro users, or are agreements and communication really that bad? It’s also not the first time Founders have had issues with using models.

Exactly! Does the word of the CEO now hold no weight? The USP of the founders license was literally local rendering for future “new models”, both for Photo & Video Apps. If @hi8upscale hadn’t called it out publicly, would the Topaz team have moved forward with this rugpull and never addressed the situation??

This is a serious breach of trust, and at minimum requires a public apology along with the new update (that would presumably grant the founders local access)!

Also, when is “Starlight Precise 2.5” (from ASTRA) or its future variants going to come to TVAI?? Hopefully it won’t take 6 months again.

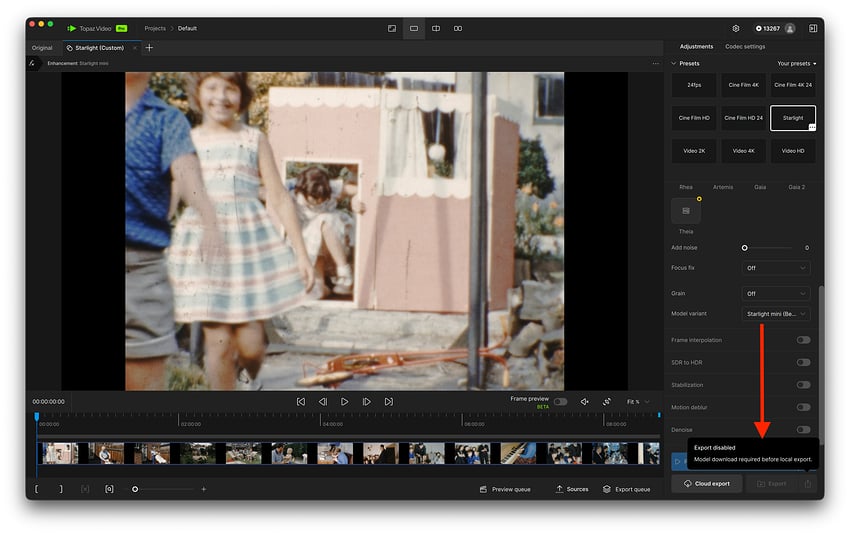

I also have an M5 Pro MacBook Pro, and it has the same issue: it asks me to download the model before I can use Starlight Mini.

Looks like Starlight Mini is no longer available locally on AMD GPUs?

Seems to me that you have unnecessarily complicated your lives with the need to maintain an extra license tier. How much revenue would the company really lose if you just converted Founders licenses to Pro licenses? It’s not as if we’re all going to suddenly start churning out $1M+ projects.

This would probably be the least complicated way forward. Transfer founders to the pro license, but keep the cost the same as what they are paying now. Then if you ever cancel your sub, you lose the special founder pro discount forever.

It works with 1.2.0 and 1.2.1, broken with 1.3.0. Topaz are working on a fix.

I am noticing the same thing.

I always copy the video I want to process to the local drive first. The main reason for me is that SMB is not reliable enough on macOS. Maybe a fraction of a percent of the time I lose the SMB connection and waste hours or days of processing.
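For anyone who wants to script that staging step, here is a minimal Python sketch. It assumes the share is already mounted as a normal folder; the function names and paths are made up for illustration and have nothing to do with Topaz itself. It copies the file to local storage and compares checksums, so a dropped connection shows up before a long render starts rather than partway through it.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large videos are not loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def stage_locally(source: Path, local_dir: Path) -> Path:
    """Copy `source` (e.g. on a mounted share) into `local_dir` and verify it.

    Hypothetical helper: raises OSError if the local copy does not match.
    """
    local_dir.mkdir(parents=True, exist_ok=True)
    target = local_dir / source.name
    shutil.copy2(source, target)  # copies data and timestamps
    if sha256_of(source) != sha256_of(target):
        target.unlink()  # do not leave a corrupt copy behind
        raise OSError(f"Copy of {source} did not verify; try again")
    return target
```

Something like `stage_locally(Path("/Volumes/share/clip.mov"), Path.home() / "scratch")` would then return the local path to open in the app (both paths are placeholders).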

Unless you’ve made a typo, the answer is ‘Never’. You have seemingly referenced the legacy version - Topaz Video AI, which will receive only minimal support and no new features in the future.

Your question would only be relevant to Topaz Video (the Studio version).

Yeah that’s what I meant, obv

video.zip (2.7 MB)

I’ve been running quality tests on the new Topaz update. Despite the faster processing speeds, it still struggles heavily with poor-quality source videos. It is faster, but to what end? I challenge the Topaz CEO himself to take this highly degraded video and restore it with this new version without introducing AI hallucinations. If he can pull that off, I’ll get a Topaz tattoo on my arm. I understand the current bottlenecks in AI restoration models, but the software still fails to deliver on its core promise to the consumer: genuinely restoring and preserving old memories.

the video is here, and anybody can try, the challenge is up to

I looked at your sample, and there is not much there to “restore.” I did a quick run using Starlight Sharp and two levels of Astra Creative, and this is probably the best you’re likely to get without using an AI model that lets you swap out faces in a video for better quality photos of the people.

test runs.zip (20.9 MB)

If even the naked eye can barely recognize faces and objects, then the only option is to invent things freely, and the result is AI garbage. My intention is to focus on bringing DVD-like or old VHS quality to FHD (or higher).

I would like more support from Topaz in this regard. Good quality is only available with Starlight 3x, and with the faces, you’re practically forcing us to go to 4K, which I don’t actually want.

Why weren’t the new models unlocked for Founders right away? There can’t be a technical reason if Pro users got them.

Nothing has happened since the release of SLM, so I’m looking forward to trying out local Starlight HQ and Fast, as promised, in a few days, right?!

What I don’t quite understand is why there are more and more models instead of improvements to the existing ones. Instead of Starlight Fast and HQ, wouldn’t an improved Starlight SLM have sufficed? An SLM with two or three settings: Speed, Medium, and Max Quality.

The main thing that can never be hallucinated properly is a face. All other details in my opinion can be hallucinated to be close enough and you wouldn’t be able to tell. Maybe a mole or something is not there, but that’s not a huge concern to me.

If I upscale a video of me that includes my face, I know instantly (obviously) whether it really looks like me or not. No AI model will ever be able to guess what I should look like if there isn’t enough data. There are only three ways this can ever be solved. Let me know if you can think of others.

- You include the specific faces in the training data for the videos you are trying to upscale. (This isn’t feasible because Topaz doesn’t have the right to do that, and they can’t train on every face on the planet.) This won’t happen.

- Probably the best way forward: the model first searches the video to see if there are any close-up shots of the face that it can pull details from, then it applies those details to parts of the video where it doesn’t have enough information to properly upscale the face (either it’s distorted for some reason, or it’s in the background and very small, with no detail).

- You upload high-quality images/portraits of the face or faces in the video and tell the program who should have that face in the video. With multiple people this might be tricky, but ideally it would support a reasonable number of faces, maybe 20-30 or more, in case you’re upscaling something like a wedding video that has a lot of people in it.

Uploading images so the program can reconstruct them accordingly sounds great, but we’re unfortunately far from that.

What I meant was, why do I have to do it 3x instead of 2x to avoid zombie-like faces? Sure, Stable Diffusion does it better when scaled up. It’s just that with SeedVR you can downscale the source material and it still looks good, but not with Topaz like that.

That’s where I think they could start, and there’s also the Alien Font issue, which the open-source tool can handle. Okay, let’s wait and see, maybe the new models will be able to do that better.

I’ve done some quick tests on Gaia 2 and can definitely see it having a use in future iterations, or as-is for select content, but as for version 1 I’m really not liking the amount of sharpening and the lack of an option to adjust it on a per-file basis. I usually opt for more subtle enhancements so as to avoid butchering smaller background details, so I would very much like to see some customizability options for this model, to at least tone down the settings from the current version. Currently this model doesn’t feel as conservative as it is intended to be.

So what is the purpose of talking about “restoring old family videos”?