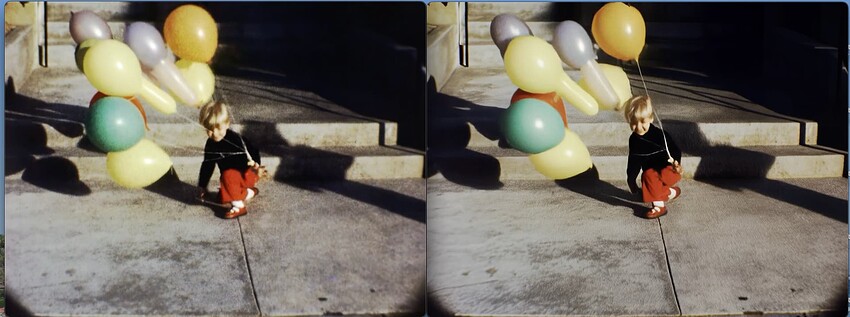

Super8 film enhanced by Proteus on the left and Starlight on the right

I gave a link in my next example. There, it’s much more pronounced and lasts a lot longer.

Yeah, that one needs a strength slider and a “recover original details” blend.
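For what it's worth, the control being asked for here could be as simple as a per-pixel linear blend between the source frame and the enhanced frame. A minimal sketch, assuming frames are flat lists of 0-255 pixel values; `blend_frames` and `strength` are hypothetical names, not anything Topaz exposes:

```python
def blend_frames(original, enhanced, strength):
    """Linearly mix two equally sized frames.

    strength = 0.0 returns the original, 1.0 returns the enhanced frame.
    Hypothetical helper for illustration; not a Topaz Video AI API.
    """
    if len(original) != len(enhanced):
        raise ValueError("frames must have the same number of pixels")
    # Clamp so a slider overshoot cannot extrapolate beyond either frame.
    s = min(max(strength, 0.0), 1.0)
    return [round((1.0 - s) * o + s * e) for o, e in zip(original, enhanced)]


original = [10, 100, 200]
enhanced = [30, 140, 180]
half = blend_frames(original, enhanced, 0.5)
```

A real "recover original details" control would likely be smarter than this (for example, blending only high-frequency detail), but a plain mix is the simplest version of the idea.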

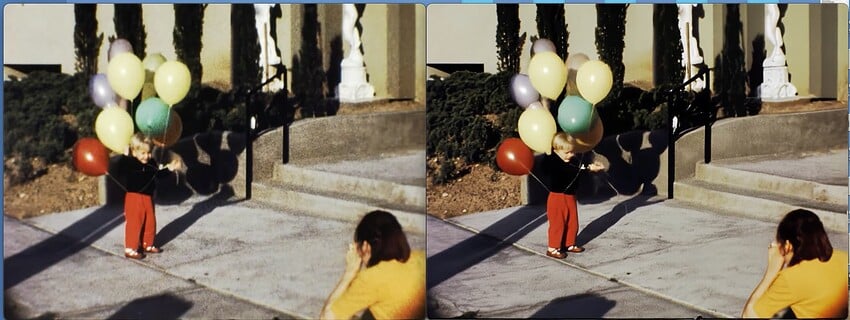

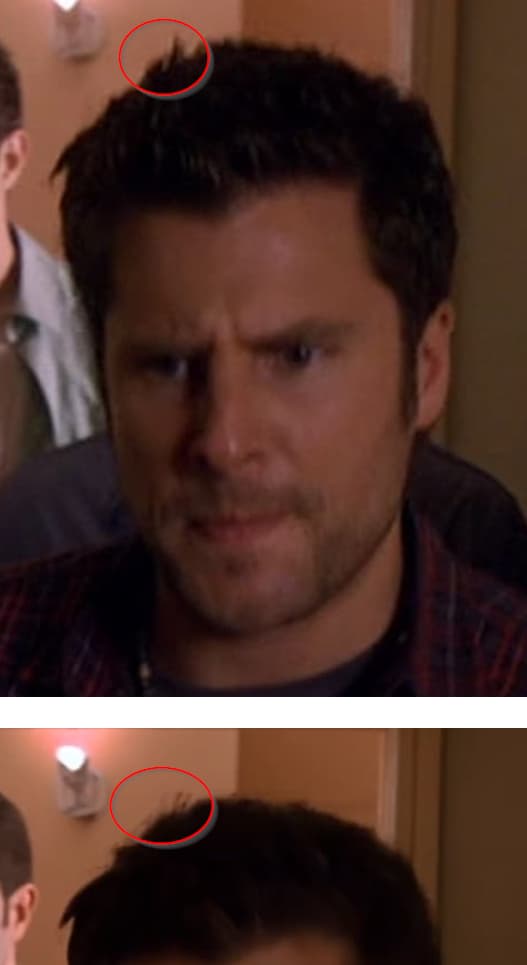

It’s not the same: the mouth shape is different and, more clearly, the frontman’s tuft of hair is not the same… so maybe Starlight interpolated between two or more frames, or simply invented something that does not exist.

Should I upload a video as interlaced, or deinterlace it first?

I think it’s best to do a high-quality QTGMC deinterlace first (Very Slow or Placebo preset).

One sample processed and the other two failed, maybe because I mistakenly uploaded longer clips than were needed. But the renders were used up as if they had worked (only one of the three actually rendered).

I need to train myself to use fewer frames.

If Topaz is using the same style of AI diffusion that’s been so popular with images, it’s no surprise that little details are being changed. The model probably works by using the source as a midpoint in the diffusion process.
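To make the "source as a midpoint" idea concrete: in image-to-image diffusion as it is commonly done, the source is noised partway along the forward process and then denoised from there, so the result stays anchored to the source without being identical to it. The sketch below shows only the noising half on a toy 1-D signal, under an assumed linear beta schedule. Nothing here is known about how Starlight actually works, and `noise_to_midpoint` and its parameters are made up for illustration.

```python
import math
import random


def alpha_bar(step, total_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal retention after `step` forward-diffusion steps.

    Assumes a linear beta schedule (a common textbook choice).
    """
    prod = 1.0
    for i in range(step):
        beta = beta_start + (beta_end - beta_start) * i / (total_steps - 1)
        prod *= 1.0 - beta
    return prod


def noise_to_midpoint(source, strength, total_steps=1000, seed=0):
    """Noise `source` up to step round(strength * total_steps).

    Returns (noisy_signal, fraction_of_source_amplitude_kept).
    A denoiser (not shown) would then run from this step back to 0.
    """
    rng = random.Random(seed)
    step = round(strength * total_steps)
    a = alpha_bar(step, total_steps)
    keep = math.sqrt(a)
    noise_scale = math.sqrt(1.0 - a)
    noisy = [keep * x + noise_scale * rng.gauss(0.0, 1.0) for x in source]
    return noisy, keep


source = [0.2, 0.5, 0.9]
_, keep_low = noise_to_midpoint(source, strength=0.2)
_, keep_high = noise_to_midpoint(source, strength=0.8)
```

With a low `strength` most of the source amplitude survives the noising step; with a high one very little does, and the denoiser has to invent the rest. That would be consistent with small details changing, though it is only a guess at the mechanism.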

Making up vivid details for faraway objects in videos is fine for an AI enhancement model, even expected. But doing that to closer, clearly discernible objects is not something people are going to love.

Anyway, I am hopeful that it is a step in the right direction. We’ll see.

I will be honest: there should not be a mandatory cloud computing model in a paid copy of Topaz Video AI (installed on a computer).

There should be two versions:

- Topaz Video AI for PC and Mac ($199): all models should run locally (with the option to run them in the cloud), with paid annual updates that are game changers (like OpenAI and its new models).
- Topaz Video AI Cloud version (free): it can be used in a browser or with the software, but use of the local GPU is not allowed; only cloud rendering.

Your new strategy is counterproductive and will discourage your first supporters (like me). I understand that it’s a company that needs to make money, but without the support of the community, it won’t work as before.

I won’t pay twice! First just to open the software, and second to use it with new paid models in the cloud.

The cloud usage cost should include access to the software. Buying or renewing TVAI should guarantee full use of the program locally.

Sorry, English is not my native language.

I agree in principle, but if you do some browsing around, you’ll find that there are no video enhancement schemes based on a diffusion model that will run on local systems (there are some for still images). The resource requirements for diffusion-based video are probably too enormous to make them practical on anything less than server-level multi-GPU systems. So for the time being, Starlight is probably the most user-approachable diffusion video processor there is, anywhere.

If Topaz’s announced plan succeeds in producing a diffusion model that can be run, albeit at a snail’s pace, on local hardware, it will be a huge achievement.

You do, however, raise an interesting point. For people interested in diffusion processing for video and none of the other features of TVAI, it could be possible for Topaz to create free logins for the web app that provide access to the paid cloud processing only.

That will be live very soon (web app → paid renders via credits)

So no base fee for the login, just pay for the cloud credits?

Right

Beta 2 is out now.