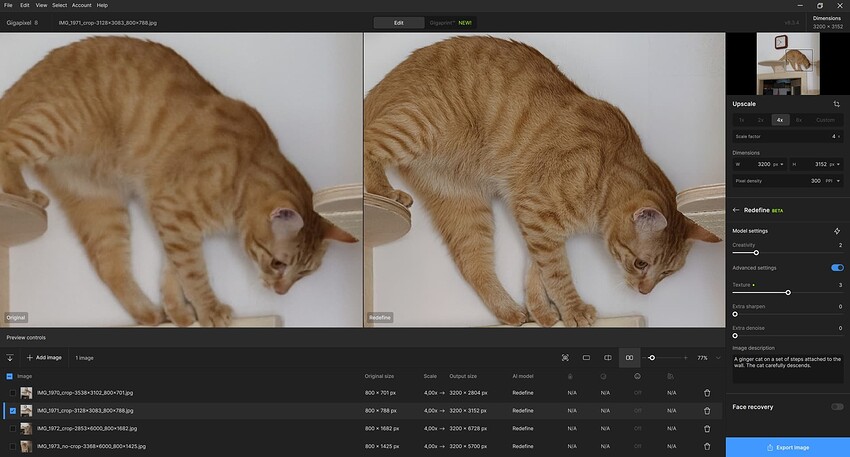

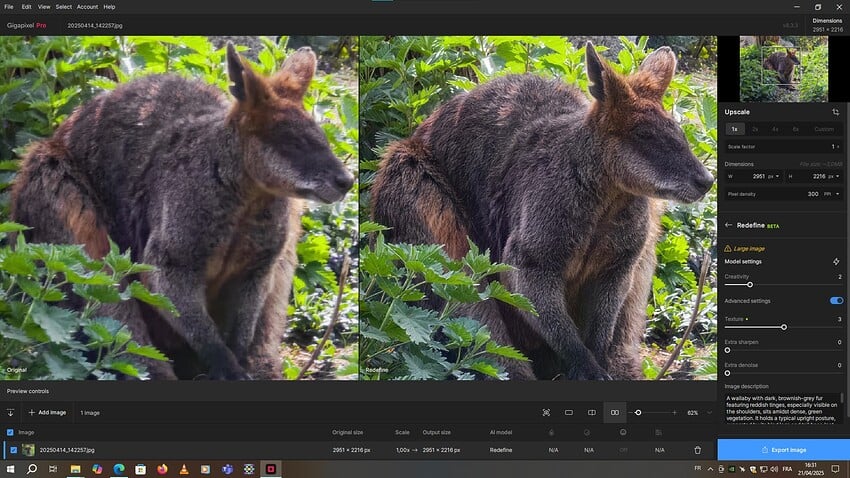

Reference Images and rendering variations with Redefine Settings

WARNING - A LONG POST WITH MANY IMAGES

This experiment was inspired by bas.evers and others

I agree with bas.evers ! I definitely like to test repeatedly in a similar way with one of “my” regular reference images. It makes it easier to compare both different Model Settings and different versions of the software. Building up a comprehensive memory of the same image helps to quickly spot differences between two or more renders. Hypothetically say there are 2 horses in an old historic print - perhaps with one version of Gigapixel you notice that for Creativity 1-2-3 they have 8 legs but with Creativity 4 they have 9 legs and perhaps with Creativity 6 they turn into 4 human figures with 7 or 8 legs. When a newer version of Gigapixel is released you have an immediate point of comparison by using that same old print image with 2 horses to check how many legs the new release renders. If you start testing the new release with a photo of chickens what do you compare the results with ?

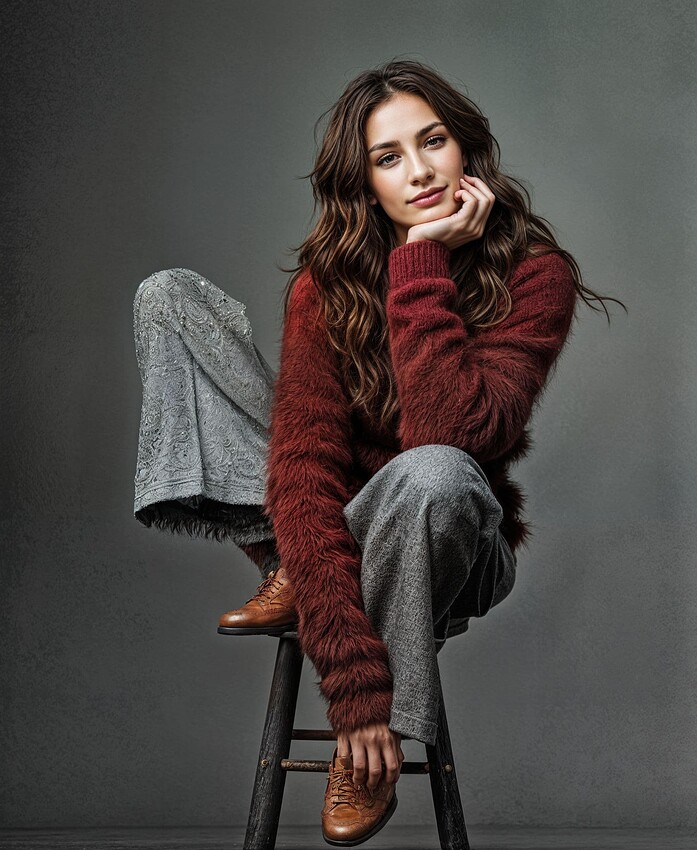

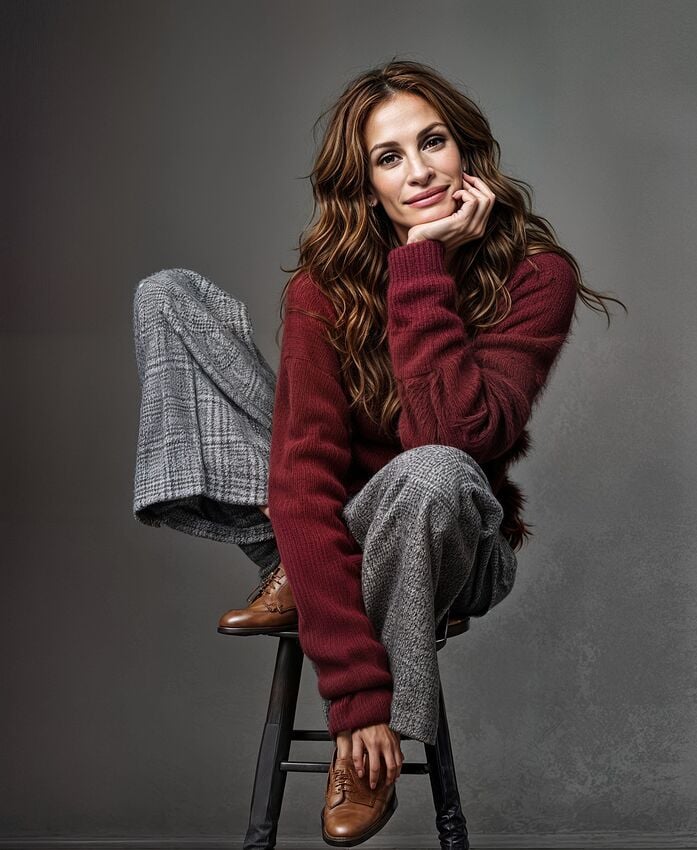

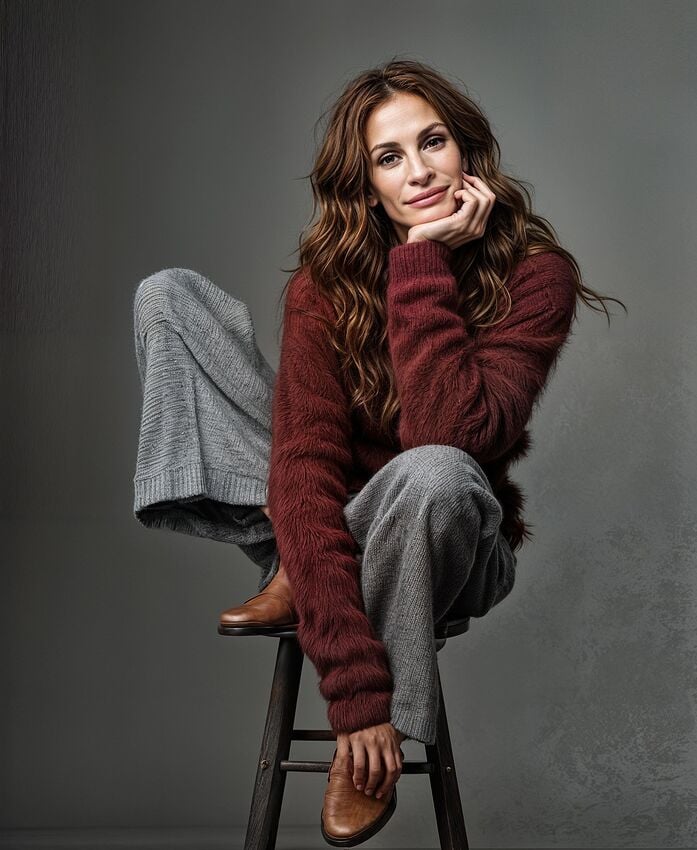

So I’ve added Julia Roberts to my standard image refence set! She is also easier on the eye than horses or chicken feathers ! And I never noticed her birthmark which now becomes a very useful “check point”.

Recently I have been investigating the use of text Prompts when using Redefine. In this post I will show examples of both Model Settings and Text Prompts. The Devil is in the detail - so inevitably there are a lot of renders to look at. If you are easily bored please don’t complain - just jump to the next post and stay cool.

Starting test image is borrowed from Bas. 900x1097px jpg - note the brown shoe detail

.

.

The first three sets of renders are upscaled by 2x with a few 3x at the end together with 2 Cloud .

Currently I’m using a 4 digit code for v8.3.4 such as C4321 - this means:

Creativity 4

Texture 3

Extra sharpen 2

Extra denoise 1

v8.3.4 offers Text Prompting for Creativity 2-6

(In comparison v8.4.0-beta4 uses 6 levels for Creativity with different names and effect?

Realistic None & Subtle and Artistic Low Medium High & Max

plus only Artistic has Text Prompting)

The first set of Redefine images uses different values for Creativity and Texture with no prompts.

(Each filename includes the settings code described above and render time by stopwatch.

The times may seem random but depend on whether Gigapixel adjusts (quicker) or recomputes (slower) after setting changes)

C1100 result looks like Julia Roberts - but already shoes are changed to Californian Artisan ?

.

.

C2200 result looks like Julia Roberts

.

.

C3200 - with Creativity 3 image starts to look less like Julia Roberts - shoes gone Italian snakeskin ?

.

.

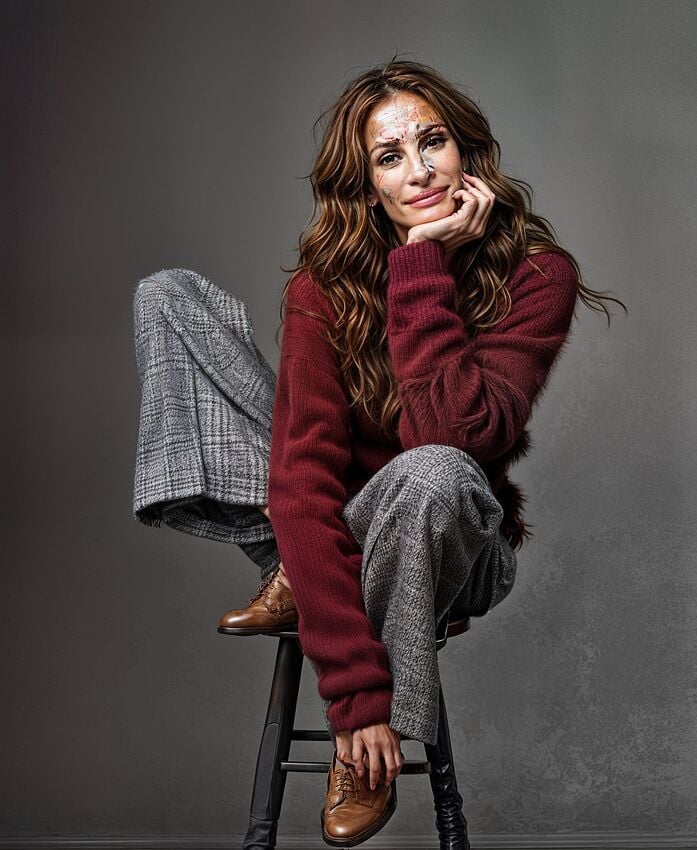

C4200 - Topaz Girl definitely appearing ? pants texture starting to breakdown - shoes + measles ?

.

.

C5200 - pants texture changed shoes very “spotty”

.

.

C6200 - grey “tartan pattern” pants gone psychedelic - shoes now have stitched toecaps

.

.

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

The next set of images use my self generated simple text prompt : " actress Julia Roberts sitting on a tall stool, wearing grey houndstooth trousers and a shaggy maroon cashmere sweater "

The addition of a prompt stabilises the facial resemblance to some degree but Julia ages as the Creativity increases ? The pants texture is probably better ? Look at the shoes for a wide degree of differences and the hair texture and colour changing.

It’s interesting how Texture setting as well as Creativity changes the results in details

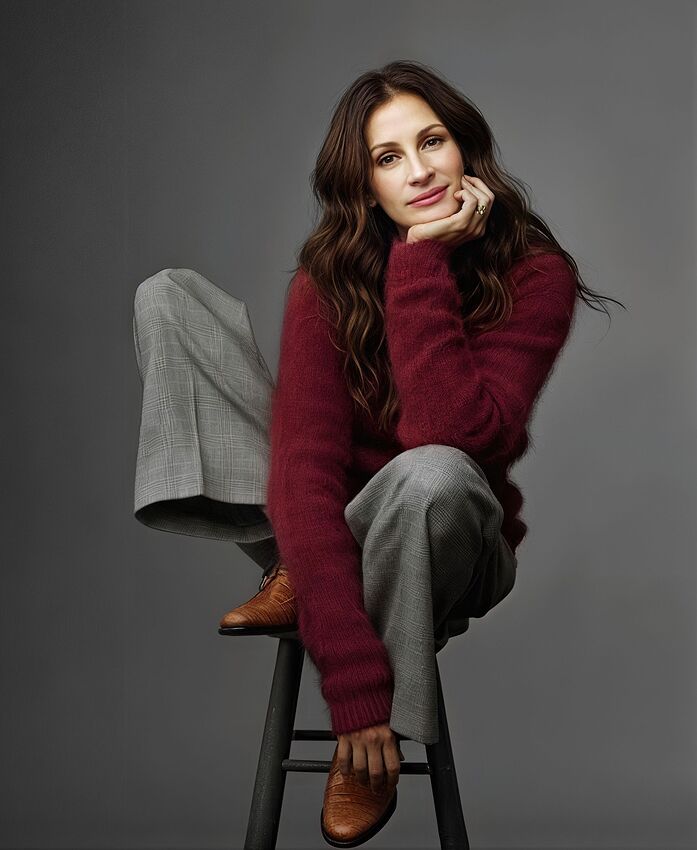

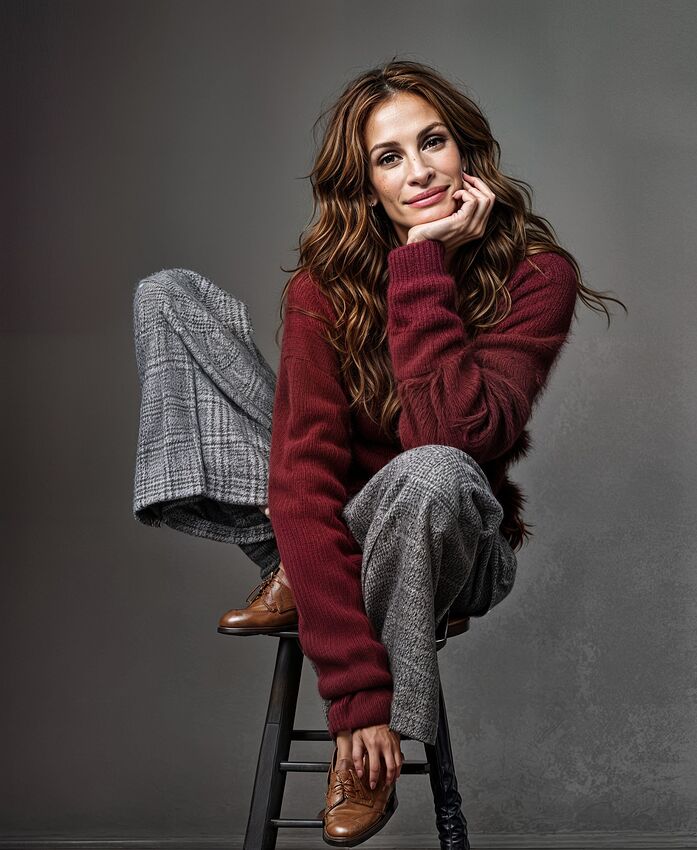

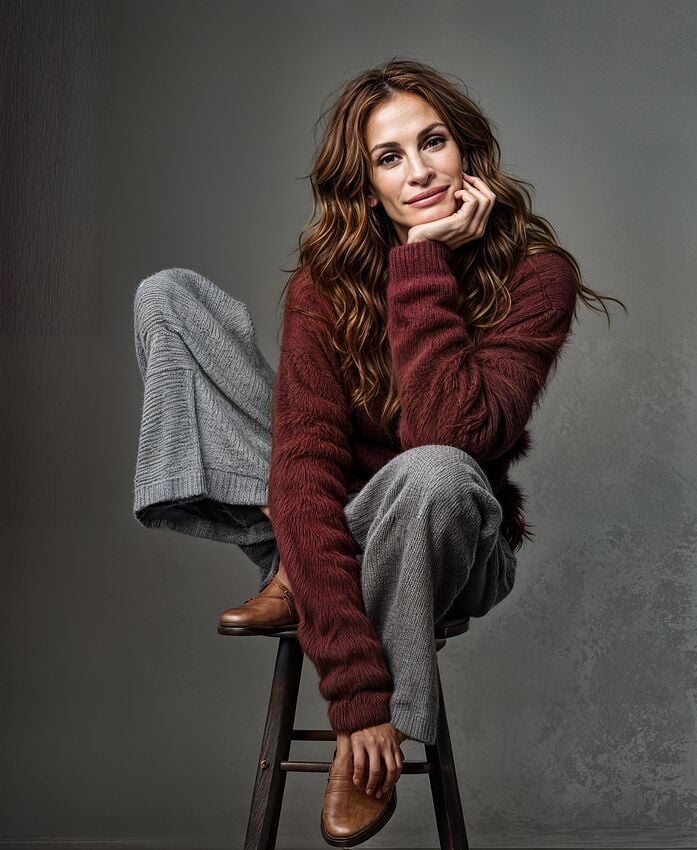

C2200 with simple text prompt

.

.

C3200 with simple text prompt

.

.

C4200 with simple text prompt

.

.

C5200 with simple text prompt –

note that with Creativity 3 the right hand stool leg starts to MELT

.

.

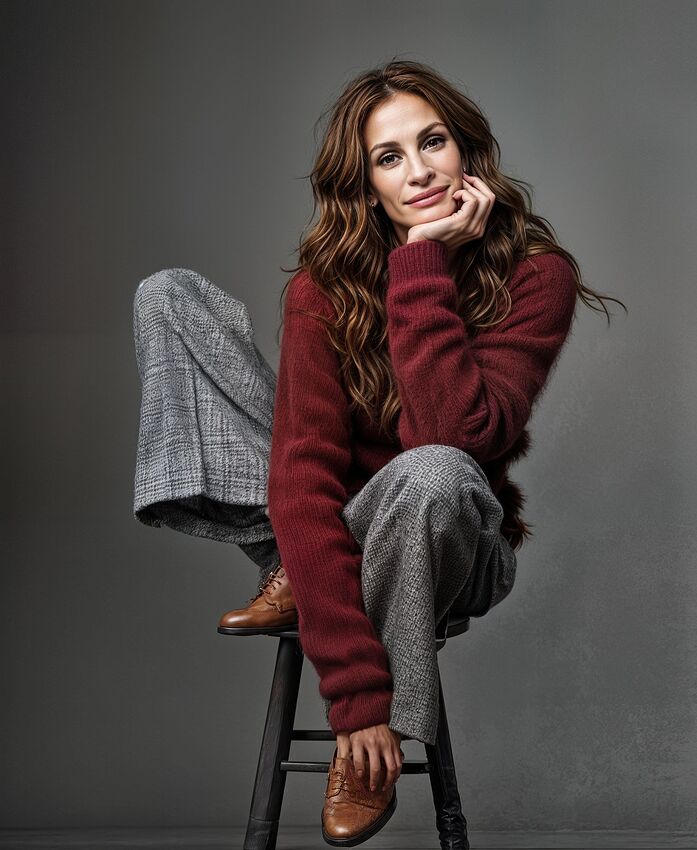

C6200 with simple text prompt

.

.

C6300 with simple text prompt

.

.

C6400 with simple text prompt

.

.

C6500 with simple text prompt - in this combination the render breaks down !

.

.

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

Then an expanded long text prompt was generated at : imageprompt.org using the option : Describe Image in Detail and changing the suggested “A woman sits …” to

" American actress Julia Roberts sits …"

American actress Julia Roberts sits on a stool, wearing a maroon sweater and gray plaid pants, in a studio portrait.

The image presents a medium shot of a seated woman against a plain, neutral gray background. The composition is simple and direct, focusing entirely on the subject. The woman sits at a slight angle, positioned slightly off-center, yet the framing is balanced. Her body language suggests a calm and collected demeanor; she is not overtly posed. Her posture appears relaxed, yet composed.

The key subject is a woman, likely in her 40s or 50s. She is light-skinned, with shoulder-length wavy brown hair. Her facial expression is calm and serene, almost contemplative. She appears to be wearing a soft, burgundy-maroon-colored, oversized sweater and gray plaid pants. The pants have a tailored, slightly loose fit. The woman’s shoes are a medium brown leather style.

The artistic style is straightforward portrait photography. The image seems to be a studio portrait, with controlled lighting and a plain backdrop to direct the viewer’s focus completely on the subject. The lighting is even, with no harsh shadows, suggesting a soft light source. The focus is sharp, and details like the texture of the clothing are evident. The colors are muted and natural, without any vibrant or intense hues. The overall style is professional and elegant.

The setting is a simple studio environment with a plain, neutral gray background. The lighting is soft and even, illuminating the woman without harsh shadows, contributing to a calm and serene atmosphere. The overall mood is relaxed, sophisticated, and professional, likely intended to convey confidence and grace.

.

.

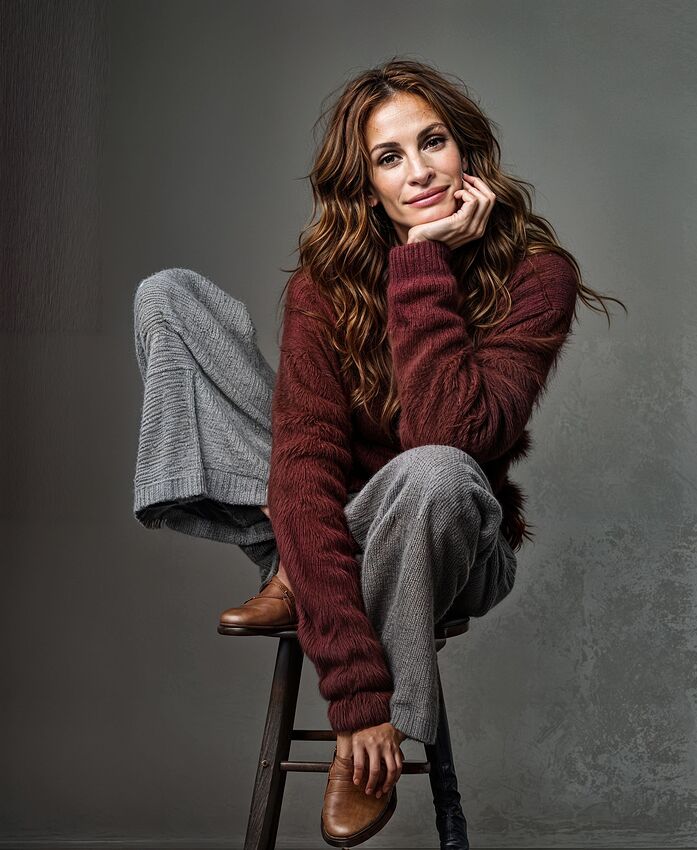

Expanded Long Text Prompt with settings C2200

.

.

.

There is no real discernable difference in using this long expanded text prompt compared with the simple Prompt. My experience suggests that a short but effective prompt does the job when starting with an existing image. Long detailed text prompts are better for AI that totally generates a picture using only text but may not be necessary for Gigapixel when starting from an existing image or photo.

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

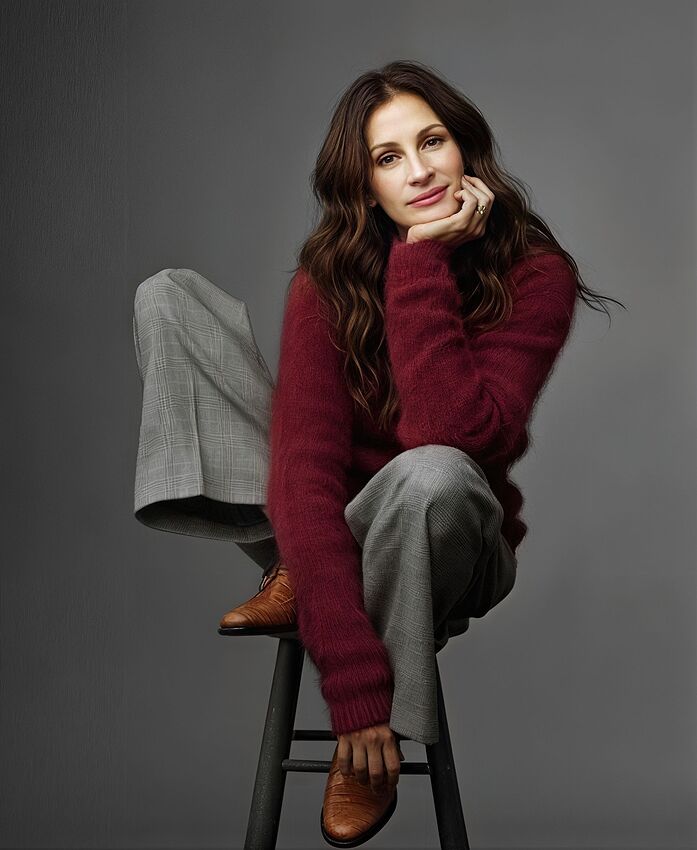

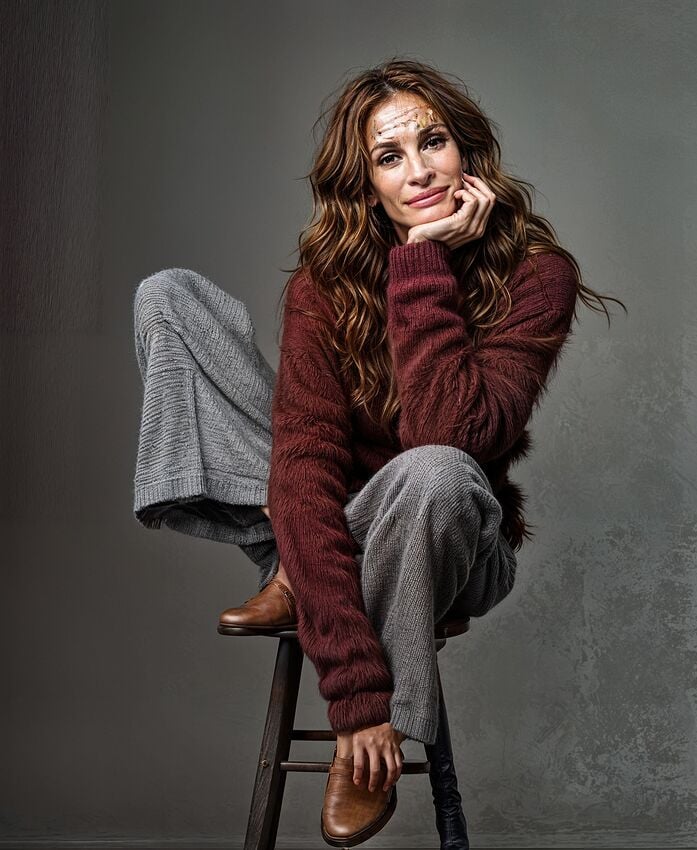

The third set of images uses a FREE ChatGPT prompt built from the starting Julia Roberts test image and asking for “A very detailed text description of the image”. A more sophisticated text prompt may be available using the Subscription ChatGPT service. Online chats say that service can accurately identify almost all celebrities and well known objects and scenes which can improve the results that it produces. The FREE service offered this : "once again I changed the offered "A woman " to "American actress Julia Roberts "

American film actress Julia Roberts sits comfortably on a tall stool against a neutral grey background, radiating a calm, confident energy. She wears a soft, textured burgundy sweater paired with loose-fitting grey plaid trousers, exuding a blend of elegance and cozy style. Her brown leather shoes add a vintage touch to her ensemble. Her long, wavy brown hair frames her relaxed face as she rests her head gently on her hand, creating an intimate and thoughtful pose. The overall composition feels warm and inviting, showcasing a stylish yet natural moment captured in a studio setting.

American film actress Julia Roberts poses gracefully while seated on a tall, dark wooden stool in a minimalist studio with a soft grey backdrop. Her posture is relaxed, with one leg bent and resting on the seat, the other hanging naturally. She is dressed in a cozy, deep burgundy fuzzy sweater that adds rich texture and warmth to the scene. Her trousers are wide-legged and tailored, featuring a subtle grey plaid pattern that contrasts nicely with the deep hue of her top. She wears polished, brown leather oxford shoes with a textured finish, peeking out from beneath her pants. Her long, brunette hair falls in soft waves around her shoulders, and she gazes gently at the camera with a serene expression. Her right hand supports her face, her fingers lightly touching her cheek, subtly highlighting a gold ring on her finger. The lighting is soft and flattering, emphasizing the texture of her clothing and the natural tones of her skin and hair. The composition and styling together evoke a timeless, effortlessly sophisticated atmosphere.

.

.

In this set there are similar differences as Creativity and Texture increases. With Texture 3 and above these changes are certainly unacceptable.

ChatGPT prompt C2200

.

.

ChatGPT prompt C3200

.

.

ChatGPT prompt C4200

.

.

ChatGPT prompt C5200

.

.

ChatGPT prompt C6200

.

.

ChatGPT prompt C6300

.

.

ChatGPT prompt C6400

.

.

ChatGPT prompt C6500 - once again this combination breaks down

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

Another variable is the sized of the final image - bigger tends to be better ?

These 4 images were upscaled 3x and if you have read this far you can probably identify and grade the differences yourself !!!

C2200

.

.

C3200

.

.

C5300

.

.

C6310

.

.

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

The final 2 images here have been done with the Cloud render option using C2200, one at 2x (2 credits) and one at 3x (3 credits). This level of Creativity and Texture was chosen as being the “best” combination in my opinion - interesting if anyone agrees or disagrees !

The 3x Cloud render is notable for the two upper corner “ghosts” which are certainly artistically creative and the two lower corner “ghost artifacts” which are not so welcome !

Cloud C2200 x2

.

.

Cloud C2200 x3

.

.

zzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzzz

My overall conclusion is that the best combination of settings isn’t always obvious and some trial and error is often needed to choose the result you like most.

I have done a lot of testing on these sets and many more on other starting images concentrating on the differences between “long or short prompts” and these sets seem to confirm that an adequate effective text prompt is analogous to “The cavalry arriving just in time!” It’s enough !

An over-long text is often extra work for nothing.

I’m sure some if not many may disagree !!!

I would welcome any comments and or recommendations for improvement as well as any necessary corrections or contrary advice you may like to offer.

Many thanks for your patience and interest if you read this far.

![]()