Hello everyone!

Today we have a very exciting update for Topaz Gigapixel. We are bringing NeuroStream and NeuroServer technology to the application, enabling our most powerful upscaling models to run locally. In addition, we have the new High Fidelity v3 model for enhancing images that are already high quality.

- NeuroStream & NeuroServer — Local Rendering for Large Models

- Wonder 2 Local Render — Photorealistic Recovery, Now Running Locally

- Wonder 3 — Smarter Recovery Across More Images

- High Fidelity v3 — Better Detail, Fewer Artifacts

You can find the installer links and the full changelog for this version of Topaz Gigapixel below. For any issues, please email us at support@topazlabs.com.

v1.2.0

Released May 7th, 2026

Windows: Download

Snapdragon: Download

Apple Silicon Mac: Download

Intel Mac: Download

NeuroStream & NeuroServer — Local Rendering for Large Models

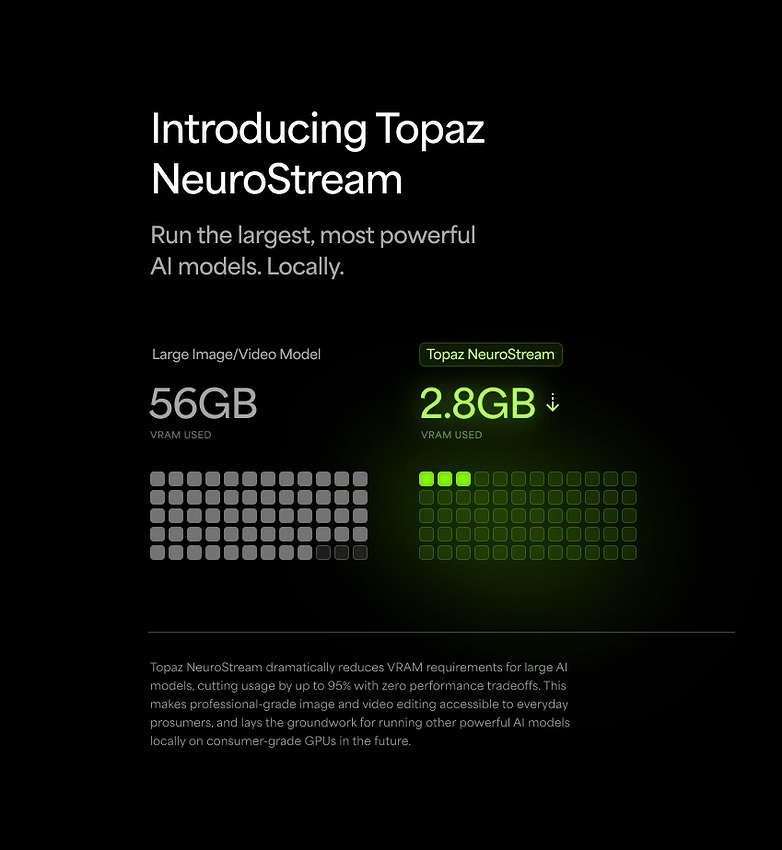

As our models have grown more powerful, so have their hardware demands. The best results were increasingly locked behind extremely powerful GPUs, limiting how many users could actually take advantage of them locally. As those requirements kept growing, we knew we needed to rethink how we build, deliver, and run these models. That rethinking led to NeuroStream.

NeuroStream is a proprietary, industry-first technology that reduces VRAM requirements by up to 95%, allowing large models like Wonder 2 and Wonder 3 to run on everyday hardware without sacrificing output quality. The only tradeoff is a minor reduction in processing speed. What once required an extremely powerful GPU, available only in the cloud, can now run on the hardware most users already have.

To run NeuroStream on your device, we’ve built NeuroServer. This lightweight local server runs on your machine whenever you select a model that requires it: Topaz Gigapixel automatically spins up NeuroServer in the background, loads the model, and processes your images. When it’s no longer needed, the server shuts down on its own.

This opens a new chapter for what’s possible in Topaz Gigapixel. NeuroStream removes the biggest barrier to running powerful models locally, and we’re already working on bringing more models to the application using this technology. We’re excited about what’s ahead for AI processing.

Wonder 2 Local Render — Photorealistic Recovery, Now Running Locally

Wonder 2 quickly became a fan favorite after its launch, delivering artifact-free enhancements, reliable text preservation, and our most powerful realistic recovery to date. The catch was that Wonder 2’s resource demands meant it could only run in the cloud.

That changes today.

With the introduction of NeuroStream and NeuroServer, we’ve built the infrastructure to bring Wonder 2 to your local machine. These technologies allow larger, more demanding models to run directly on your device, and Wonder 2 is the first to take advantage of them.

Local rendering is currently supported on:

- NVIDIA GPUs: 8GB VRAM or more

- AMD GPUs: 8GB VRAM or more

- Apple Silicon: 16GB RAM, macOS 14 or later

Intel Mac and Windows ARM (Snapdragon) users will continue to use cloud render for Wonder 2. Cloud render supports output up to 100MP.

For supported hardware, you can choose between local and cloud render depending on your preference. The local and cloud models have a slightly different character: the local model renders a bit sharper and less grainy than the cloud version, with particularly strong handling of faces and skin detail. If portraits are a big part of your workflow, it’s worth trying both and seeing which look you prefer.

With local rendering now available, you can process as many images as you want directly on your device. No upload times, just Wonder 2 running locally.

Wonder 3 — Smarter Recovery Across More Images

Wonder 2 set a high bar for general enhancement, but its recovery was not yet at the level we were hoping for. Low-detail images, whether blurry, soft focus, or false resolution, saw limited improvement after processing, hitting the limits of what Wonder 2 could handle. Where Wonder 1 could over-process images and make them look artificial, Wonder 2 often felt like it was not doing enough to improve them. It fell short here, so we kept working to build the next version.

We tested Wonder 3 and quickly found it to be an extremely capable model. It handles images of all sizes and quality levels. The low quality images that Wonder 2 did not improve enough were easily recovered with Wonder 3, with an emphasis on realism. The new model produces high quality details that previous Wonder models struggled with, and it handles difficult false resolution cases extremely well with no extra controls needed. You get better results on a wider range of images without having to intervene.

On this portrait, the skin and hair textures are photorealistic. AI models usually create smooth skin and hair with a repetitive structure. Here, the hair weaves naturally around itself and falls as expected, and the skin texture has the small imperfections you would expect from real skin.

Here’s another example with wildlife imagery. The eyes and feathers, where our attention naturally falls, are sharp. The feathers have a blend of softness and detail for a convincing result.

The model has two controls. Scale sets your target output size. Enhancement strength controls how generative the model is, giving you more powerful recovery when the image calls for it. Strength is paired with our automatic image-detail detection: when a low-detail image is detected, strength is set automatically to produce the best result. For most images you won’t need to touch it.

Local rendering is currently supported on:

- NVIDIA GPUs: 8GB VRAM or more

- AMD GPUs: 8GB VRAM or more

- Apple Silicon: 16GB RAM, macOS 14 or later

Intel Mac and Windows ARM (Snapdragon) users can use cloud render for Wonder 3. Cloud render supports output up to 100MP.

We want to hear how it performs on your images, especially at 1x and 2x and on images that have given Wonder 2 trouble in the past. Drop your results and feedback in this thread.

High Fidelity v3 — Better Detail, Fewer Artifacts

High Fidelity is designed for images that are already in good shape. Where other models focus on recovery, High Fidelity’s job is to enhance what’s already there: sharper detail, cleaner textures, and upscaled output that holds up at full resolution. We found that v2 had shortcomings with high quality images: it removed details in the original image that we may want to keep (such as noise), created repeating patterns instead of natural textures, and softened important details.

The top image is from v2, and you can see it tried to denoise the image when it should not have, leaving the sky smooth but the flag noisy. The details on the flag are slightly blurry.

The output from v3 treats the noise as an important part of the image and keeps it consistent. It also sharpens the flag, producing cleaner lines and better-focused stars.

The core change in v3 is a two-part architecture. Before upscaling begins, an analysis model reads the image, both as a whole and at the block level, to build a detailed understanding of its content. The upscaling model then uses that context to produce output that stays true to the original while generating realistic detail even at larger upscale values. Earlier models would struggle here, as small structures get magnified significantly and realistic detail becomes much harder to maintain.

This approach also meaningfully reduces AI artifacts. Two areas we’re especially happy with: natural scenes and skin. Leaves, grass, and similar patterns used to come out repetitive and visibly generated. v3 produces realistic variation. Skin textures, which previously came out either too smooth or too harsh, now retain their natural softness.

Beyond artifact reduction, v3 is better at preserving and enhancing textures that are already present in the image. Rather than softening fine detail during upscaling, it sharpens it further, producing a crispness that previous High Fidelity models couldn’t match.

High Fidelity v3 is best suited for images that are already high resolution and clearly detailed. Raw files and images above 6MP are ideal candidates. If the source image has strong existing detail, v3 will enhance it further rather than approximate it. For lower resolution or lower quality images, Wonder 3 is the better starting point.

To use the new model, select the High Fidelity model, then pick v3 from the dropdown.

We want to hear how it performs on your images, especially on subjects where v2 produced repetitive textures or visible artifacts. Drop your results and feedback in this thread.

Known Issues

- The default strength for High Fidelity v3 is set too high on first run. Please reduce the strength to 50 as a starting point.

Changelog

- Add NeuroServer for running large models locally on NVIDIA, AMD, and Apple Silicon devices

- Add Wonder 2 local render through NeuroServer

- Add Wonder 3 local render through NeuroServer

- Add Wonder 3 cloud render

- Add Upscale - High Fidelity v3 model for local render

- Fixed Redefine creativity defaulting back to Low

- Fixed crop restricting max scale incorrectly

- Fixed shortcuts activating on the “Get help” dialog

- Mac installers are now split into x86_64 (Intel) and arm64 (Apple Silicon) builds

Lingyu Kong

Technical Product Manager

Image AI