I would like to see and learn how people pre-process their video before VEIA.

My strategies vary; sometimes something works, sometimes it doesn't.

DEINTERLACE

Deinterlacing works better or worse for tons of reasons, so I try different approaches scene by scene.

I start by deinterlacing in one of two ways:

- After Effects with the old Red Giant deinterlace plugins (they don't work in the recent CC 2020, so I keep the old 2017 version of After Effects to run them)

- An external free solution based on VapourSynth. I use it on Mac, following a great package and tutorial from a skilled user.

Here you can find the tutorial to set it up:

Blackmagic Forum • View topic - VapourSynth + QTGMC Deinterlace + Hybrid FAQ for macOS

It also exists for Windows, but you have to adapt the installation steps from Mac to Windows.

The second solution gives you a 50 fps video (it doubles the frame rate to avoid the risk of losing data from the fields), which I sometimes blend down to 25 fps with Resolve's optical flow (far better than the Adobe solution, and far faster).
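
To show why deinterlacing this way doubles the frame rate, here is a minimal sketch of a naive "bob" deinterlace in plain Python. It is not QTGMC, which does much more work to rebuild the missing lines; it only illustrates that each interlaced frame holds two fields and each field becomes its own output frame. The function names and the `top_field_first` parameter are made up for this illustration, and the field order of your footage is something you have to check yourself.

```python
# Naive "bob" deinterlace on frames stored as lists of rows.
# Illustration only: a real deinterlacer such as QTGMC interpolates the
# missing lines instead of simply repeating rows.

def split_fields(frame):
    """Return (top_field, bottom_field): the even rows and the odd rows."""
    return frame[0::2], frame[1::2]

def line_double(field):
    """Rebuild a full-height frame by repeating each field row once."""
    out = []
    for row in field:
        out.append(row)
        out.append(row)
    return out

def bob(frames, top_field_first=True):
    """Turn N interlaced frames into 2N progressive frames."""
    result = []
    for frame in frames:
        top, bottom = split_fields(frame)
        # Field order decides which half of the frame is shown first.
        first, second = (top, bottom) if top_field_first else (bottom, top)
        result.append(line_double(first))
        result.append(line_double(second))
    return result

# One second of 25 fps interlaced video, only 4 rows per frame for brevity.
second_of_video = [[f"f{n}r{r}" for r in range(4)] for n in range(25)]
print(len(bob(second_of_video)))  # 50
```

No field is thrown away, which is the point of going to 50 fps first and reducing to 25 fps only afterwards.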

Sharpening halos and other artifacts

I need strategies here. If someone knows how to remove these without losing too much of the video, I would like to learn. I found various videos and plugins, but most of them also remove too much of the small detail, which gets recognized as halo defects.

Sometimes I got good results by scaling in small steps: first down from 576p to 480p (which shrinks the halos), then up from 480p to 720p, 720p to 1080p, and 1080p to 2160p, and finally back down from 2160p to 1080p for the final.
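
For anyone who wants to plan such a chain, here is a small Python sketch that lists the frame size and scale factor of each step. It assumes square pixels and a 4:3 picture by default; the function name `chain_sizes` and its parameters are just for this example, and the rounding of the width to an even number is my own assumption about what encoders usually accept.

```python
from fractions import Fraction

def chain_sizes(heights, dar=Fraction(4, 3)):
    """Return (width, height, factor) for each height in the chain.

    dar is the display aspect ratio; widths assume square pixels and are
    rounded to the nearest even number. factor is the scale relative to
    the previous step (None for the starting size).
    """
    steps = []
    previous = None
    for h in heights:
        width = round(h * dar / 2) * 2
        factor = None if previous is None else round(h / previous, 3)
        steps.append((width, h, factor))
        previous = h
    return steps

# The chain described above. Note that PAL SD is stored as 720 x 576 with
# non-square pixels; 768 x 576 is the square-pixel 4:3 equivalent.
for width, height, factor in chain_sizes([576, 480, 720, 1080, 2160, 1080]):
    print(width, height, factor)
```

Pass `dar=Fraction(16, 9)` for widescreen footage.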

SIZE

I have observed that reducing the resolution of a clip sometimes lets VEIA work better. Some clips at the original 720 x 576 (I'm in PAL land) don't give me good results; scaling them to 640 x 480, or 640 x 400 for 16:9 footage, gives me better results.

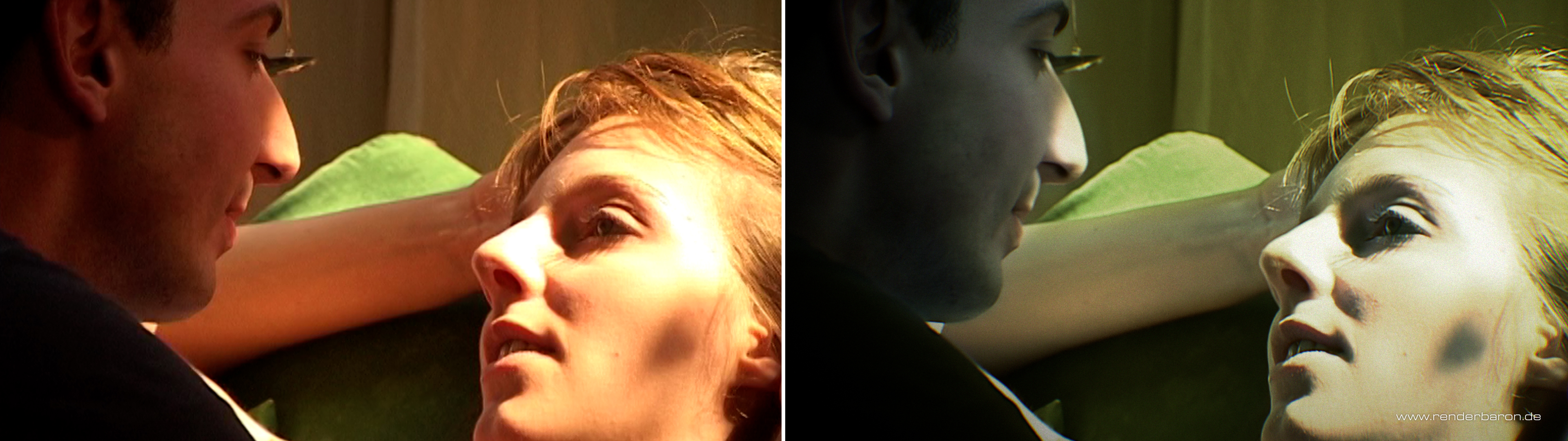

Color pre-processing

Most old video codecs oversaturated the video, so I reduce saturation and vibrance to let VEIA see the small details in saturated pixels.
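
As a rough idea of what the saturation part does to a single pixel, here is a sketch using Python's standard `colorsys` module. It only scales saturation in HSV; vibrance is a different, non-linear control and is not modeled here. The function name `desaturate` and the factor 0.8 are arbitrary choices for the example, not values I recommend.

```python
import colorsys

def desaturate(rgb, factor=0.8):
    """Scale the HSV saturation of one RGB pixel (channels in 0.0-1.0).

    factor < 1.0 reduces saturation; hue and value are left unchanged.
    """
    r, g, b = rgb
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return colorsys.hsv_to_rgb(h, min(1.0, s * factor), v)

# A fully saturated red gets some of the other channels mixed back in,
# while a neutral grey is left untouched.
print(desaturate((1.0, 0.0, 0.0)))
print(desaturate((0.5, 0.5, 0.5)))
```

In a clipped, oversaturated area one channel sits at its maximum while the others are near zero, so pulling saturation down brings the other channels back up.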

AI Up

I have observed that I sometimes get good results from scaling the footage up and then down:

from 640 to 1920, from 1920 to UHD, then later down again from UHD to FHD for the final master, because it gives me a more pleasing result.

Please share your thoughts and your strategies.