```

Topaz Video v1.1.0

System Information

OS: Windows v11.25

CPU: AMD Ryzen Threadripper PRO 7995WX 96-Cores 511.5 GB

GPU: NVIDIA RTX PRO 6000 Blackwell Workstation Edition 94.336 GB

GPU: NVIDIA RTX PRO 6000 Blackwell Workstation Edition 94.336 GB

Processing Settings

device: 0.1 vram: 1 instances: 1

Input Resolution: 1920x1080

Benchmark Results

Artemis 1X: 51.01 fps 2X: 18.78 fps 4X: 05.22 fps

Iris 1X: 55.82 fps 2X: 20.82 fps 4X: 05.86 fps

Proteus 1X: 52.53 fps 2X: 20.97 fps 4X: 05.57 fps

Gaia 1X: 36.60 fps 2X: 20.41 fps 4X: 05.21 fps

Nyx 1X: 35.80 fps 2X: 27.13 fps

Nyx Fast 1X: 51.13 fps

Nyx XL 1X: 31.48 fps

Rhea 4X: 05.29 fps

RXL 4X: 06.15 fps

Hyperion HDR 1X: 10.71 fps

4X Slowmo Apollo: 39.90 fps APFast: 74.41 fps Chronos: 47.65 fps CHFast: 38.68 fps

16X Slowmo Aion: 50.22 fps

```

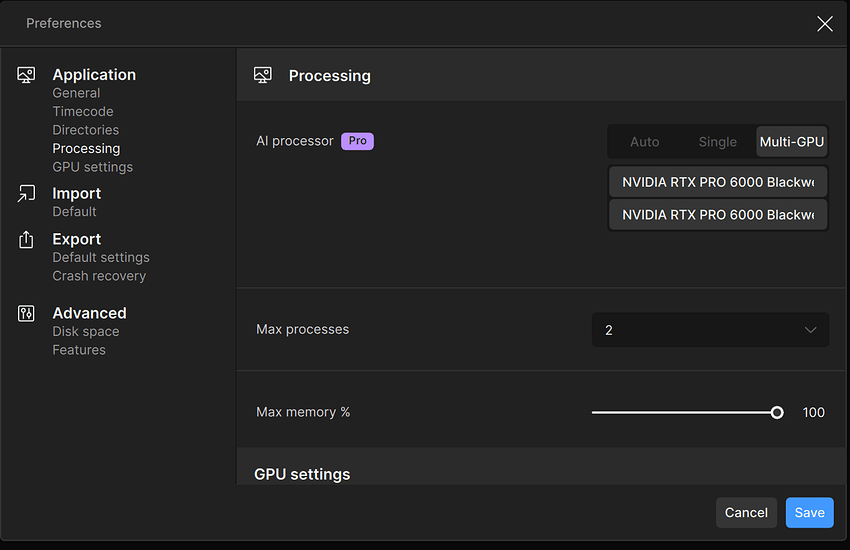

Dual RTX PRO 6000 Blackwell GPUs (multi-GPU benchmark)

```

Topaz Video v1.1.0

System Information

OS: Windows v11.25

CPU: AMD Ryzen Threadripper PRO 7995WX 96-Cores 511.5 GB

GPU: NVIDIA RTX PRO 6000 Blackwell Workstation Edition 94.336 GB

GPU: NVIDIA RTX PRO 6000 Blackwell Workstation Edition 94.336 GB

Processing Settings

device: -2 vram: 1 instances: 1

Input Resolution: 1920x1080

Benchmark Results

Artemis 1X: 48.68 fps 2X: 19.99 fps 4X: 05.02 fps

Iris 1X: 53.07 fps 2X: 21.28 fps 4X: 05.49 fps

Proteus 1X: 55.72 fps 2X: 20.92 fps 4X: 05.45 fps

Gaia 1X: 20.02 fps 2X: 14.25 fps 4X: 05.24 fps

Nyx 1X: 31.03 fps 2X: 22.40 fps

Nyx Fast 1X: 50.51 fps

Nyx XL 1X: 29.85 fps

Rhea 4X: 05.22 fps

RXL 4X: 05.22 fps

Hyperion HDR 1X: 15.32 fps

4X Slowmo Apollo: 37.09 fps APFast: 65.73 fps Chronos: 41.47 fps CHFast: 41.36 fps

16X Slowmo Aion: 38.45 fps

```

(Single-GPU test)

I have a genuine question. In earlier versions (before the PRO version was released), using multiple GPUs gave almost a 2× speedup. In recent versions, however, as the benchmarks above show, there is little to no difference between the single-GPU and multi-GPU results.
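To put numbers on that, here is a rough Python sketch that divides the multi-GPU fps by the single-GPU fps for a handful of models. The fps values are copied from the two runs above. It assumes the first run (`device: 0.1`) is the dual-GPU one and the second (`device: -2`) is the single-GPU one, as the captions suggest. The `speedups` helper is just an illustrative name.

```python
# fps values copied from the two benchmark runs above (1920x1080 input).
# Assumption: first run (device: 0.1) = dual GPU, second run (device: -2) = single GPU.
multi_gpu = {
    "Artemis 1X": 51.01,
    "Iris 1X": 55.82,
    "Proteus 1X": 52.53,
    "Gaia 1X": 36.60,
    "Nyx 1X": 35.80,
    "Hyperion HDR 1X": 10.71,
    "Aion 16X": 50.22,
}
single_gpu = {
    "Artemis 1X": 48.68,
    "Iris 1X": 53.07,
    "Proteus 1X": 55.72,
    "Gaia 1X": 20.02,
    "Nyx 1X": 31.03,
    "Hyperion HDR 1X": 15.32,
    "Aion 16X": 38.45,
}


def speedups(multi, single):
    """Return multi-GPU fps divided by single-GPU fps for each shared model."""
    return {name: multi[name] / single[name] for name in multi if name in single}


ratios = speedups(multi_gpu, single_gpu)
for name, ratio in ratios.items():
    print(f"{name:<16} {ratio:.2f}x")
```

Under that assumption, Gaia 1X is the only listed model that comes close to 2×. Some models (Proteus 1X, Hyperion HDR 1X) are actually slower in the dual-GPU run.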

This may vary by model, but for models that already existed in those earlier versions, shouldn’t the multi-GPU speedup still be the same?

Of course, if you run multiple scenes on separate GPUs, you can still get nearly double the throughput across many scenes. But running inference on a single scene across multiple GPUs no longer seems to provide any meaningful benefit.
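For reference, the "separate scenes on separate GPUs" workaround is roughly the following. This is only a sketch of the scheduling idea, and it does not call Topaz at all. The `assign_scenes` helper and the file names are hypothetical. In practice each queue would be handed to its own export process pinned to one device.

```python
from collections import defaultdict


def assign_scenes(scenes, gpu_ids):
    """Distribute scenes round-robin so each GPU gets its own independent queue."""
    queues = defaultdict(list)
    for i, scene in enumerate(scenes):
        queues[gpu_ids[i % len(gpu_ids)]].append(scene)
    return dict(queues)


# Hypothetical scene files, split across GPU 0 and GPU 1.
scenes = ["scene_01.mov", "scene_02.mov", "scene_03.mov", "scene_04.mov"]
plan = assign_scenes(scenes, gpu_ids=[0, 1])

for gpu, queue in plan.items():
    print(f"GPU {gpu}: {queue}")
```

This only helps total throughput when there are several scenes to process. It does nothing for the time taken by any one scene, which is the case I am asking about.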