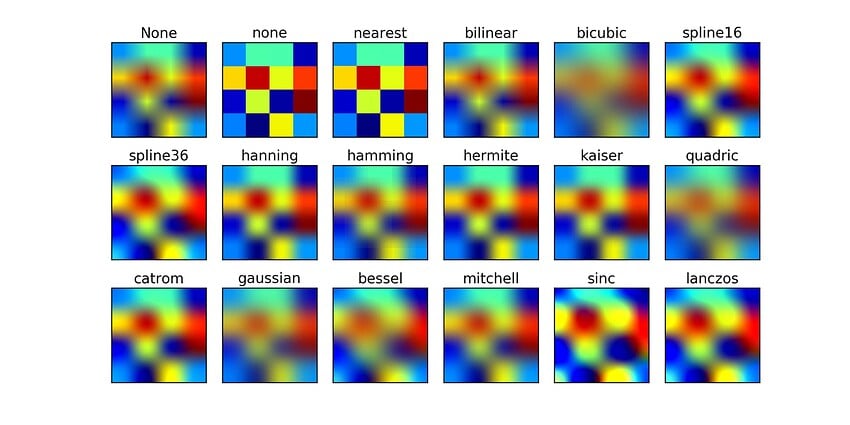

Downscaling, like upscaling, is best chosen individually based on the footage and the desired output. There is no “best” scaler. So VEAI either has to include a very big set of different input/output scalers or go for the best compromise (or a smaller set of options)…

Related to this topic is an issue that has been mentioned many times elsewhere:

The order in which prescaling/preprocessing, inference (the AI treatment) and output scaling are done…

There are many cases where the combination of the above matters and influences the outcome a lot. And in the end, it's all a matter of personal taste.

Example: very blurry footage from a TV/SAT recording that was originally shot on a CCTV camera at a TV station, then upscaled with whatever the person at the TV station had at hand, then squeezed to a 2:1 ratio for SAT deployment… (a VERY common case for “older TV shows” that are still broadcast today over “non-1080p SAT”).

In this case, the original resolution is far lower than what is broadcast: the “optical resolution” is lower than the number of pixels present. To compensate, the image is often sharpened to counteract the blurriness before it is encoded for broadcast.

Here, a processing queue like this one is often best:

- scale the image back down to the original optical resolution

- try to get rid of the oversharpening

- then run it through VEAI

- then correct for the 2:1 PAR (pixel aspect ratio)

At the moment, step 1 is not possible in VEAI, and step 4 is internally applied before step 3. The oversharpening can be addressed with the Artemis de-halo models in some cases; in others, external filtering is needed.

Let's leave out the sharpening issue and not even get started on deinterlacing. That still leaves the lack of individual pre- and post-scaling/resizing inside VEAI. So the result will be a picture with heavily stretched pixels horizontally, and a FAR less optimal result compared to having control over the order of processing…
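To make the order-of-operations argument concrete, here is a small Python sketch that only tracks frame size and pixel aspect ratio (PAR) through both orders; it does no actual image processing. The numbers are hypothetical: a 960x1080 stored frame with a 2:1 PAR (displayed as 16:9), whose real optical resolution is assumed to be about 480x540. `ai_upscale` is a stand-in for the VEAI model (it just doubles both axes) and `dehalo` is a no-op placeholder; none of these names are VEAI functions.

```python
from dataclasses import dataclass, replace
from fractions import Fraction
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Frame:
    width: int       # stored pixel columns
    height: int      # stored pixel rows
    par: Fraction    # pixel aspect ratio: display width of one pixel / its height


Step = Tuple[str, Callable[[Frame], Frame]]


def downscale_to(width: int, height: int) -> Step:
    """Resize to width x height while keeping the display aspect ratio."""
    def run(f: Frame) -> Frame:
        dar = f.width * f.par / f.height  # display aspect ratio of the input
        return Frame(width, height, dar * height / width)
    return (f"downscale to {width}x{height}", run)


def dehalo() -> Step:
    """Hypothetical placeholder for a de-halo filter; frame size is unchanged."""
    return ("dehalo", lambda f: f)


def ai_upscale(factor: int) -> Step:
    """Hypothetical stand-in for the AI model: scales both axes by `factor`."""
    return (f"AI x{factor}",
            lambda f: replace(f, width=f.width * factor, height=f.height * factor))


def correct_par() -> Step:
    """Stretch horizontally so pixels become square (PAR 1:1)."""
    return ("PAR correction",
            lambda f: Frame(round(f.width * f.par), f.height, Fraction(1)))


def run_pipeline(frame: Frame, steps: List[Step]) -> Tuple[Frame, Optional[Frame]]:
    """Apply steps in order; also return the frame the AI step was fed."""
    ai_input = None
    for name, step in steps:
        if name.startswith("AI"):
            ai_input = frame
        frame = step(frame)
        print(f"  {name:<24} -> {frame.width}x{frame.height} (PAR {frame.par})")
    return frame, ai_input


broadcast = Frame(960, 1080, Fraction(2))  # hypothetical 2:1 squeezed SAT frame
optical = (480, 540)                       # hypothetical true optical resolution

print("Preferred order:")
good, good_ai_in = run_pipeline(
    broadcast, [downscale_to(*optical), dehalo(), ai_upscale(2), correct_par()])

print("PAR correction before AI:")
bad, bad_ai_in = run_pipeline(broadcast, [correct_par(), ai_upscale(2)])

# How many stored pixels each optical pixel covers in the frame the AI receives
for label, ai_in in (("preferred", good_ai_in), ("PAR first", bad_ai_in)):
    sx = Fraction(ai_in.width, optical[0])
    sy = Fraction(ai_in.height, optical[1])
    print(f"{label}: AI sees each optical pixel as {sx} x {sy} stored pixels")
```

With PAR correction applied first, the AI step is fed a frame in which each optical pixel covers 4 x 2 stored pixels, i.e. it is stretched twice as much horizontally as vertically, while the preferred order hands the model roughly one stored pixel per optical pixel.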

Long story short: this is only one example, but we could look at hundreds more… The more possibilities there are to tamper with the footage, the less “beginner friendly” the software will get. It will always come down to some compromise.

I'd personally take another route in development: make a sketch of what the software is going to be capable of, which user group will be addressed and which “type of user experience” the software is aiming for, THEN implement these features as planned on a roadmap.

At the moment, the development of VEAI takes another approach: build what's possible, ask the community about wishes and then go from there, depending on what can be done in a meaningful time, resulting in short release cycles to “keep it interesting”…

IMHO, a good compromise would be to have two sections inside VEAI:

prefiltering

and postfiltering

and throw a handful of options into each of them to deal with resizing, cropping, some basic colour/hue/brightness filters and maybe a denoise option etc.…

This should keep it simple enough and offer a lot more individual processing, while still being “easy” to develop and implement…
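A rough sketch of what such a prefilter/postfilter split could look like, in Python on tiny grayscale frames stored as nested lists. Everything here is hypothetical: the filter names, the `process` function and the use of a nearest-neighbour upscale as a stand-in for the AI model are illustrative assumptions, not anything VEAI exposes. `box_downscale` is a plain area-average filter, just one possible downscale option; another downscaler could go into the same slot.

```python
from typing import Callable, List

Image = List[List[float]]          # grayscale frame: rows of pixel values in 0..1
Filter = Callable[[Image], Image]


def crop(top: int, bottom: int, left: int, right: int) -> Filter:
    """Remove the given number of pixels from each edge."""
    def run(img: Image) -> Image:
        return [row[left:len(row) - right] for row in img[top:len(img) - bottom]]
    return run


def box_downscale(factor: int) -> Filter:
    """Area-average downscale by an integer factor (a simple box filter)."""
    def run(img: Image) -> Image:
        h, w = len(img) // factor, len(img[0]) // factor
        return [[sum(img[y * factor + dy][x * factor + dx]
                     for dy in range(factor) for dx in range(factor)) / factor ** 2
                 for x in range(w)]
                for y in range(h)]
    return run


def brightness(offset: float) -> Filter:
    """Add a constant offset, clamped to the 0..1 range."""
    return lambda img: [[min(1.0, max(0.0, p + offset)) for p in row] for row in img]


def nearest_upscale(factor: int) -> Filter:
    """Placeholder for the AI model: plain nearest-neighbour upscale."""
    return lambda img: [[p for p in row for _ in range(factor)]
                        for row in img for _ in range(factor)]


def process(img: Image, prefilters: List[Filter], model: Filter,
            postfilters: List[Filter]) -> Image:
    """Run user-chosen prefilters, then the model, then user-chosen postfilters."""
    for f in prefilters:
        img = f(img)
    img = model(img)
    for f in postfilters:
        img = f(img)
    return img


frame = [[(x + y) / 10 for x in range(8)] for y in range(6)]  # 8x6 test frame
out = process(
    frame,
    prefilters=[crop(1, 1, 0, 0), box_downscale(2)],  # 8x6 -> 8x4 -> 4x2
    model=nearest_upscale(2),                         # 4x2 -> 8x4
    postfilters=[brightness(0.05)],
)
print(len(out[0]), "x", len(out))  # 8 x 4
```

The point of this layout is that the user decides what runs before and what runs after the model, so an ordering problem like the TV/SAT example above becomes a question of which list a filter goes into.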

The above-mentioned downscaler, “perceptual based downscaling”, could be one of the downscale options.