To me, the DxO one looks more natural.

That’s right.

I like how Gigapixel corrects the focus together with Denoise. We mostly shoot between f/2.0 and f/1.4 for reportage, so the focus can be a bit off.

But basically, DeepPRIME looks the way it should.

I’m busy trying to find the sweet spot between denoising and enlarging.

The goal is to shoot normally, with Auto ISO + Realtime Subject Tracking.

The difference is that, thanks to Gigapixel, all images now come out the same size.

That was not the case in the past, because cropping could take you below the 4K target size.

The image is from a wedding reportage.

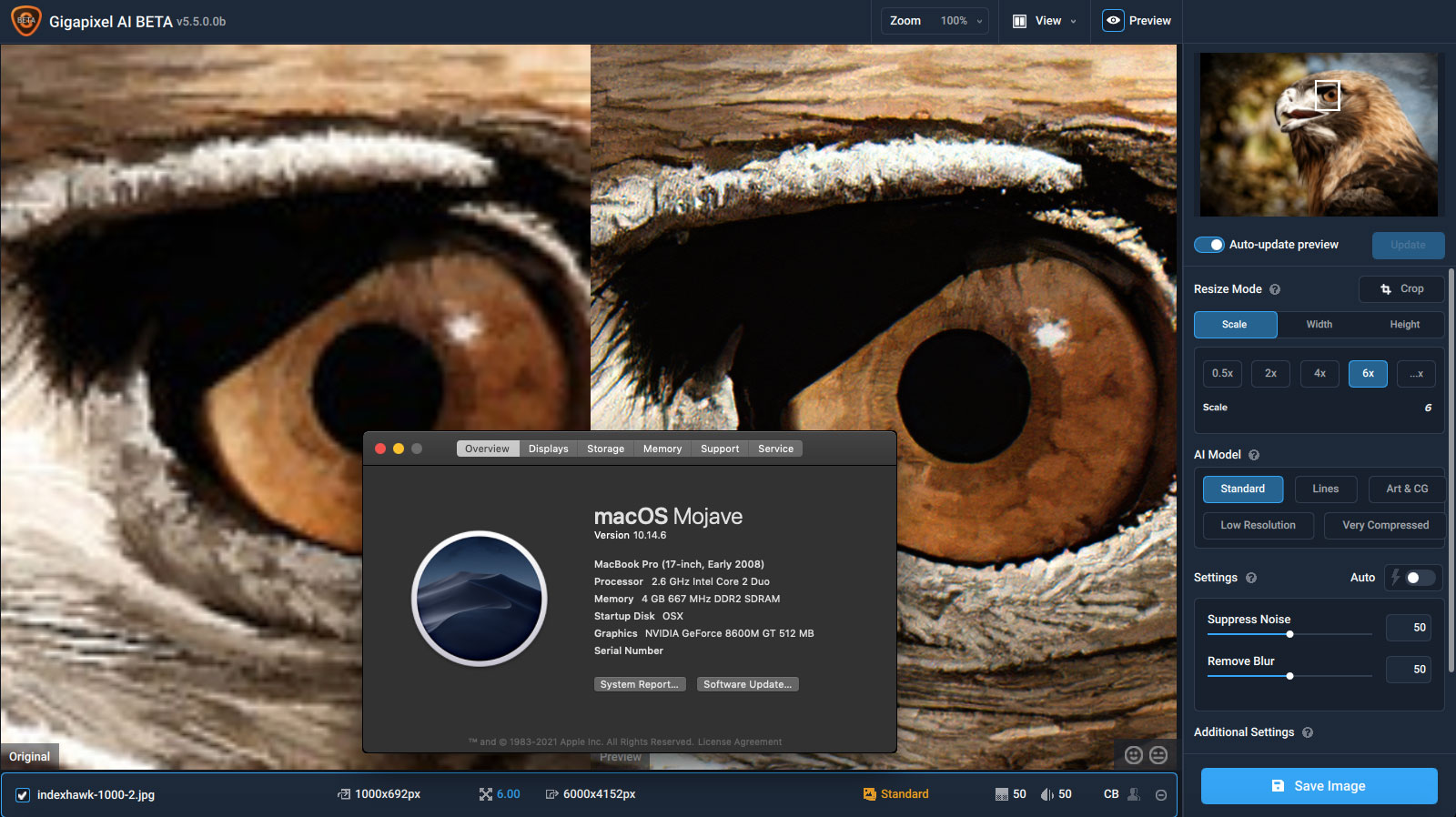

100% Crop (4K) - Capture One + Denoise 3.0.3 + Gigapixel 5.5.0

(2019) 100% Crop (4K) - Capture One + AI Clear - Custom Workflow in PS.

(4K) - Capture One + Denoise 3.0.3 + Gigapixel 5.5.0

(2019) - Capture One + AI Clear - Custom Workflow in PS.

Custom Workflow in PS.

Duplicate layer → Denoise 2019, AI Clear High → Select sharp area → Invert selection → Layer from copy (50% opacity) → Select subject → Invert selection → Layer from copy (50% opacity).

The goal was to reduce the opacity over the focus area and the subject by 50%, because of the artifacts that AI Clear (High) was creating.

This way I was able to denoise the background very well while keeping detail in the important areas.
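The goal described above boils down to a masked opacity blend: full-strength denoise on the background, half-strength over the focus area and subject. A minimal Python sketch, assuming grayscale images held as nested lists and a boolean mask standing in for the Photoshop selections (all names here are hypothetical); it shows the blend arithmetic, not the exact Photoshop layer stack:

```python
# Sketch of the masked-opacity idea: full denoise on the background,
# 50% denoise over the protected area (focus area + subject).
# Images are hypothetical grayscale 2D lists; the mask marks protected pixels.


def blend_pixel(original: float, denoised: float, opacity: float) -> float:
    """Normal blend: the denoised layer at `opacity` over the original."""
    return original * (1.0 - opacity) + denoised * opacity


def masked_denoise(original, denoised, protect_mask, protected_opacity=0.5):
    """Opacity 1.0 outside the mask, `protected_opacity` inside it."""
    result = []
    for orig_row, den_row, mask_row in zip(original, denoised, protect_mask):
        out_row = []
        for o, d, protected in zip(orig_row, den_row, mask_row):
            opacity = protected_opacity if protected else 1.0
            out_row.append(blend_pixel(o, d, opacity))
        result.append(out_row)
    return result


if __name__ == "__main__":
    original = [[100, 120], [200, 180]]    # noisy input
    denoised = [[110, 110], [190, 190]]    # hypothetical AI Clear output
    mask = [[False, True], [False, True]]  # right column = subject / focus area
    print(masked_denoise(original, denoised, mask))  # [[110.0, 115.0], [190.0, 185.0]]
```

Each output pixel is original × (1 − opacity) + denoised × opacity, so at 50% the protected areas keep half of the original detail while the background gets the full denoise.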

And since there was a line artifact at the time, one layer was converted into a luminance layer using the layer function to make the artifact less noticeable.

Fortunately, that is no longer necessary with the Low Light model.

Also, take a look at the speed:

- Photoshop custom denoise: 20 sec/image
- Capture One 21 (0.7 sec/image) + Denoise Low Light (3 to 4 sec/image) + Gigapixel (1 to 4 sec/image) = about 9 sec/image in the worst case, with better image quality and all images the same size.
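The timings above add up as follows. This small Python sketch uses only the numbers quoted in the post and assumes the three steps run one after another. The 1,600-image batch size is borrowed from later in the thread purely for illustration:

```python
# Per-image timings quoted in the post, in seconds, as (best, worst).
# Assumption: the steps run sequentially, so their times simply add up.
PHOTOSHOP_CUSTOM = (20.0, 20.0)
NEW_PIPELINE_STEPS = {
    "Capture One 21": (0.7, 0.7),
    "Denoise Low Light": (3.0, 4.0),
    "Gigapixel": (1.0, 4.0),
}


def pipeline_range(steps):
    """Sum best-case and worst-case seconds per image across sequential steps."""
    best = sum(b for b, _ in steps.values())
    worst = sum(w for _, w in steps.values())
    return best, worst


def batch_hours(seconds_per_image, images):
    """Total processing time in hours for a batch of images."""
    return seconds_per_image * images / 3600.0


if __name__ == "__main__":
    best, worst = pipeline_range(NEW_PIPELINE_STEPS)
    print(f"New pipeline: {best:.1f} to {worst:.1f} s/image")
    n = 1600  # illustrative batch size
    print(f"{n} images, worst case: {batch_hours(worst, n):.1f} h "
          f"vs Photoshop: {batch_hours(PHOTOSHOP_CUSTOM[1], n):.1f} h")
```

With those numbers, the new pipeline takes 4.7 to 8.7 s per image. A 1,600-image batch takes roughly 3.9 h in the worst case, compared with about 8.9 h for the Photoshop workflow.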

Why do you use Denoise for such pictures?

If I had to check up to 1,600 images one by one to see which are noisy and which are not, it would take days.

Big request to the developers! If you build a version of the program and it turns out to be broken, then after fixing it you must change the version number of the new release, so there is no confusion about which build was fixed and which was not. This is the right approach for all programmers who release their products. Even for me, it is very hard to figure out which build with the same number worked and which did not. So don't skimp on version numbers: even if you only fixed a test build, be sure to add another digit. You can add as many as you like, and there is nothing shameful about it. When you upload builds with the same number, it is unclear which version is currently available for download: the fixed one, or the one fixed again under the same number. If the previous build was broken, just append a number (5.5.00123) and it will be clear. I really appreciate and understand the work of programmers, because I know what it involves. Thank you for understanding!
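The request is essentially about giving every uploaded build a distinct, comparable version number. This hedged Python sketch shows why that matters. It assumes dotted numeric versions and an appended build component; the "5.5.0.1" form is an illustrative convention, not Topaz's actual scheme:

```python
# Two builds that share "5.5.0" cannot be told apart, while an appended
# build component makes the fixed build sort later.


def parse_version(version: str) -> tuple:
    """Turn '5.5.0.1' into (5, 5, 0, 1) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def is_newer(candidate: str, installed: str) -> bool:
    """True if `candidate` sorts after `installed`."""
    return parse_version(candidate) > parse_version(installed)


if __name__ == "__main__":
    print(is_newer("5.5.0", "5.5.0"))    # False: re-uploaded fix, same number
    print(is_newer("5.5.0.1", "5.5.0"))  # True: same fix with a build number appended
```

Comparing the parts as integers also keeps 5.5.10 ahead of 5.5.9, which a plain string comparison would get wrong.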

The results with the CPU option look better: richer in detail, more sensibly enhanced, and stable in operation. But there is an issue: total CPU utilization never exceeds 50%, and the utilization of each thread varies. Sorry, I don’t have permission to post pictures.

For those who want to download all of the models up front, instead of having the application download the optimized models when they’re first used, you can get them here:

Windows | Mac

(links updated 2021-06-21)

To load the models into Gigapixel, do the following:

1. Make sure Gigapixel is closed.

2. Unzip the download that corresponds to your operating system.

3. Navigate to C:\ProgramData\Topaz Labs LLC\Topaz Gigapixel AI\tgrc on Windows, or /Library/Application Support/Topaz Labs LLC/Topaz Gigapixel AI/tgrc on Mac.

4. Copy the contents of the unzipped archive into the folder from step 3. Don’t copy the folder itself: each model (e.g. ghc-v1-fp32-192x192-tf.tz) should sit directly in the tgrc folder, not in a subfolder.

5. Open Gigapixel AI and verify that “Downloading Optimized Models” does not show up.
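If you prefer to script steps 3 and 4, here is a minimal Python sketch. It assumes model files end in .tz, like the example ghc-v1-fp32-192x192-tf.tz, and it uses the two tgrc paths listed above. copy_models_flat is a hypothetical helper, not part of Gigapixel. Close Gigapixel first. Writing to these folders may require administrator rights, and for the beta you would substitute the BETA folder name:

```python
# Hypothetical helper: copy every model file from the unzipped download
# directly into the tgrc folder, flattening away any sub-folders.
# Assumption: model files end in ".tz".
import shutil
import sys
from pathlib import Path

TGRC_WINDOWS = Path(r"C:\ProgramData\Topaz Labs LLC\Topaz Gigapixel AI\tgrc")
TGRC_MAC = Path("/Library/Application Support/Topaz Labs LLC/Topaz Gigapixel AI/tgrc")


def copy_models_flat(unzipped_dir: Path, tgrc_dir: Path) -> list:
    """Copy all *.tz files found anywhere under unzipped_dir into tgrc_dir itself."""
    copied = []
    for model in sorted(unzipped_dir.rglob("*.tz")):
        target = tgrc_dir / model.name  # flatten: keep only the file name
        shutil.copy2(model, target)     # overwrites a file with the same name
        copied.append(target)
    return copied


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python copy_models.py <unzipped-folder>
    tgrc = TGRC_WINDOWS if sys.platform.startswith("win") else TGRC_MAC
    for path in copy_models_flat(Path(sys.argv[1]), tgrc):
        print("copied", path.name)
```

Because only file names are kept, a model nested inside the archive's own folder still lands directly in tgrc rather than in a subfolder.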

Note that to navigate to the Gigapixel AI models folder, you’ll need to have “Show Hidden Folders and Files” selected for Finder in Mac or File Explorer in Windows, as both ProgramData and Library are hidden folders by default.

Note to beta testers: you can do the same for the beta; just replace Topaz Gigapixel AI with Topaz Gigapixel AI BETA in the path given in step 3.

Thanks, Taylor. I have a couple of questions, and a problem:

- The problem: when I download the model, the engine says “downloading…”, but then it’s stuck at 2%, and I don’t know whether it’s blocked because of a bug or actually downloading.

- With older versions, CPU-only processing gave better results than the other methods, such as OpenVINO or GPU. And I just discovered that the optimized models I downloaded are only for OpenVINO.

So, unless you can assure me that OpenVINO gives results strictly identical to CPU-only processing, how can I use GAI and be sure the calculations are done on the CPU only? (Especially since I have a Ryzen CPU, not an Intel one.)

Yes, spending around 2 minutes instead of 14 like before is better, but I want the best quality, so I want to be sure I’m getting it.

In short: I want control over the models I use. Please. We can already choose not to use the GPU, thanks, but I want to be sure I’m using only the CPU if that gives the better result.


It’s downloading. It’s a blocking call, so it may look like nothing is happening, but it is.

If you want to use the CPU strictly, go into your models folder (the tgrc folder; let me know if you need help finding it) and delete all files ending in -ox.tz, -ov.tz, or -ml.tz. Make sure the -tf.tz files stay in there, though.
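Here is the same cleanup as a Python sketch. It assumes every model sits directly in the tgrc folder. As a deliberate variant of the instructions above, it moves the -ox.tz, -ov.tz and -ml.tz files into a backup folder instead of deleting them. It also defaults to a dry run, and it refuses to touch anything if no -tf.tz files are present, since the CPU models must stay. The function name and backup location are hypothetical:

```python
# Sketch of the CPU-only workaround: set aside every -ox.tz, -ov.tz and -ml.tz
# model and keep the TensorFlow (-tf.tz) ones. Variant: files are moved to a
# backup folder instead of being deleted, so they can be restored later.
import shutil
from pathlib import Path

NON_CPU_SUFFIXES = ("-ox.tz", "-ov.tz", "-ml.tz")
CPU_SUFFIX = "-tf.tz"


def set_aside_non_cpu_models(tgrc_dir: Path, backup_dir: Path, dry_run: bool = True) -> list:
    """Return the files that would be (or were) moved out of tgrc_dir."""
    models = [p for p in tgrc_dir.iterdir() if p.is_file()]
    if not any(p.name.endswith(CPU_SUFFIX) for p in models):
        # Without -tf.tz files there would be nothing left for CPU mode to load.
        raise RuntimeError("no -tf.tz models found; not touching anything")
    to_move = sorted(p for p in models if p.name.endswith(NON_CPU_SUFFIXES))
    if not dry_run:
        backup_dir.mkdir(parents=True, exist_ok=True)
        for path in to_move:
            shutil.move(str(path), str(backup_dir / path.name))
    return to_move
```

Run it once with dry_run=True to review the list, then again with dry_run=False. Restoring means moving the files back into tgrc.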

Open the application and turn on “Disable Model Downloading” in Preferences. You can now process, and it will use the CPU models (based on TensorFlow). To confirm it’s using them, open one of your logs and check the output; it should say something like “using gmp-something-tf.tz”.
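To check a log without reading it by hand, a small Python sketch can pull out every model file name the log mentions. It assumes the log names models roughly the way the reply suggests (e.g. gmp-something-tf.tz). The exact log format and location aren't given here, so you pass the log path yourself:

```python
# Sketch: scan a log for model file names and report which suffixes appear.
# Assumption: models appear in the log as "<name>-<suffix>.tz".
import re
import sys
from pathlib import Path

MODEL_NAME = re.compile(r"[\w.-]+-(tf|ox|ov|ml)\.tz")


def model_suffixes_in_log(log_text: str) -> set:
    """Return the set of model suffixes (e.g. {'tf'}) mentioned in the log text."""
    return {m.group(1) for m in MODEL_NAME.finditer(log_text)}


def uses_cpu_models_only(log_text: str) -> bool:
    """True if at least one model is mentioned and every one is a -tf.tz model."""
    return model_suffixes_in_log(log_text) == {"tf"}


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python check_log.py <path-to-log>
    text = Path(sys.argv[1]).read_text(errors="replace")
    print(sorted(model_suffixes_in_log(text)), uses_cpu_models_only(text))
```

A log that mentions no model names at all returns False, so an empty or unrelated log isn't mistaken for a CPU-only run.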

We plan to add this along with a future framework that gives users more control over what the engine uses and downloads, so this is just a workaround for now.

It seems this beta version also has a problem. It installed fine (apart from an annoying message about another installer still running, which I ignored). I can work on an image, and the preview works and looks fine, but I cannot save the result. I see the save progress bar, but when it finishes, the resulting file is nowhere to be found! I tried changing the filename and the target directory, with no luck. Strange. I am running Mojave on an iMac.

Manual install worked on Catalina. I’m curious about the unzipped models download: do I put the whole models folder in the tgrc folder, or just the contents of that folder?

The contents. I’ll make a note of that in the instructions, thanks!

I did that at first, but the engine crashed immediately. I think I deleted the -tf.tz files too, though, so…

Thanks for your help, I’ll do this.

Great, thanks! Also, I should replace the existing files, correct?

You can, but you don’t have to.

Why is the zip file 15 GB?

Because the models are optimized for many different hardware combinations. It’s easier and safer to download all of them than to guess which hardware combination the application might use on your machine (a wrong guess could cause unforeseen problems we’re not accounting for). That’s the whole reason we moved to in-app downloadable models.

Taylor,

Is my 2012 iMac with a 2.7 GHz quad-core Intel Core i5 processor and 16 GB of 1600 MHz DDR3 RAM, running Catalina 10.15.7, “modern” enough for the 5.5.0 upgrade? Right now, Gigapixel is running fine on my system, and I don’t want to install an upgrade that will degrade performance. Thanks! ~Michael

It definitely is. You may want to wait until 5.1.1 is released early next week, however, as it includes a few stability improvements for almost exactly your system spec.

Excellent. Thank you!