Hi Topaz Video,

I am upscaling high-quality 4K visual FX videos built from quality photographic resources [EDIT: and computer-generated resources], with computer visual FX applied. (I am upscaling them to 6K so that I can move a 4K selection window within the 6K frame to break up the symmetry, then apply additional FX, layering, etc., to produce a final video that is 4K.)
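In case the window trick is unclear, here is a minimal hypothetical sketch of it in Python. It assumes UHD 4K (3840x2160) and treats "6K" as 1.5x that size (5760x3240); the actual 6K dimensions Topaz Video AI outputs may differ. The function name `crop_offset` and the simple linear pan are illustrative only, not part of any tool's API.

```python
# Hypothetical sketch: pan a 4K crop window across an upscaled 6K frame.
# Assumption: 4K = 3840x2160 and "6K" = 1.5x that (5760x3240).

SRC_W, SRC_H = 5760, 3240    # assumed 6K frame size
CROP_W, CROP_H = 3840, 2160  # 4K output window


def crop_offset(frame, n_frames, start=(0, 0), end=None):
    """Return the (x, y) top-left corner of the 4K window for `frame`.

    The window moves linearly from `start` to `end` over `n_frames`
    frames and is clamped so it always stays inside the 6K frame.
    """
    max_x, max_y = SRC_W - CROP_W, SRC_H - CROP_H
    if end is None:
        end = (max_x, max_y)  # default: pan diagonally to the far corner
    t = frame / (n_frames - 1) if n_frames > 1 else 0.0
    x = round(start[0] + t * (end[0] - start[0]))
    y = round(start[1] + t * (end[1] - start[1]))
    # Clamp so the crop never runs off the edge of the 6K frame.
    return min(max(x, 0), max_x), min(max(y, 0), max_y)


if __name__ == "__main__":
    for f in (0, 124, 249):
        print(f, crop_offset(f, 250))
```

Because the window is never centred on the FX's axis of symmetry for long, the final 4K frame does not show the symmetry as plainly.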

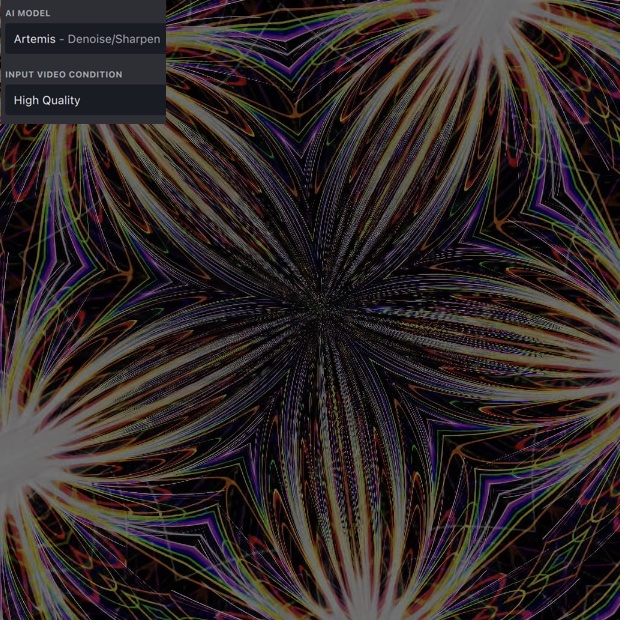

Some of the highly symmetrical FX, for example the classic Kaleidoscope-type FX, give rise to distinct and distracting pixelation in the centre: lines get “stretched out” into individual pixels with gaps between them. This is noticeable in stills, but it is more prominent when the video is driven with parametrics. You can see the pixel dots streaming into or out of the centre of the video, and there is visible aliasing.

I’ve attached a small portion of a still to show the typical pixelation [EDIT: This example is from a fully computer-generated resource, but a similar issue arises when circular-symmetry FX are applied to photographic resources].

I’d welcome any advice on which Topaz Video AI settings and algorithms I can use to help reduce this pixelation while I upscale. I do have plenty of other video tools available, but I’d like to tackle the problem first with Topaz, “upstream”, while upscaling to 6K, so that any improvement carries through before further FX are applied.